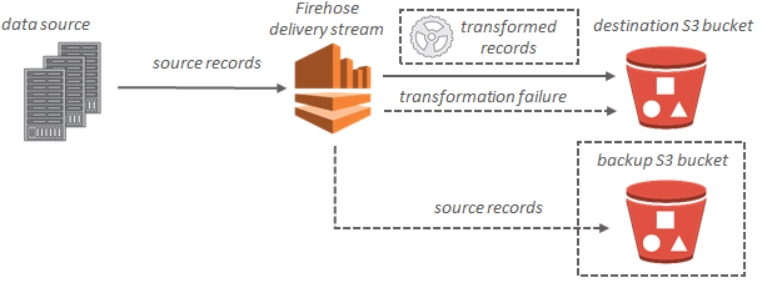

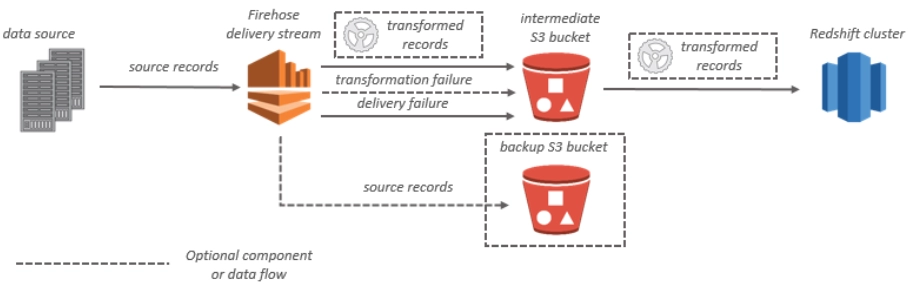

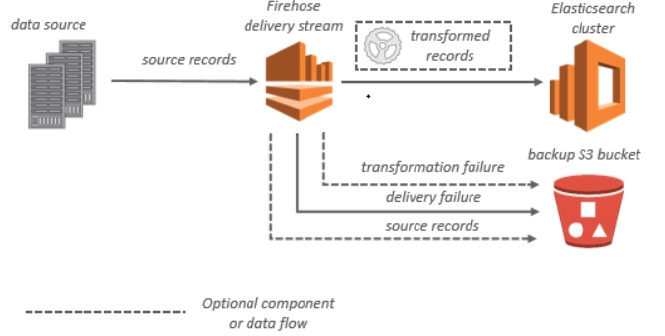

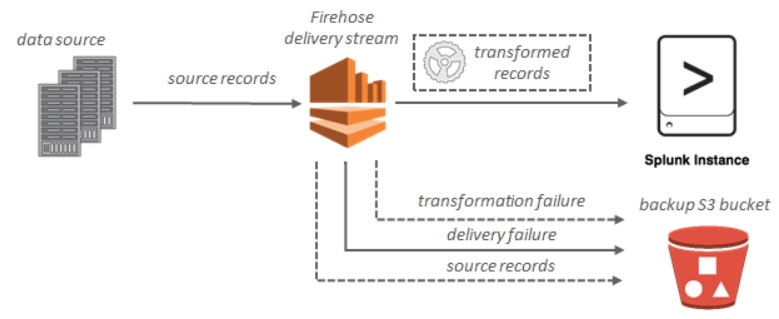

Data Flow

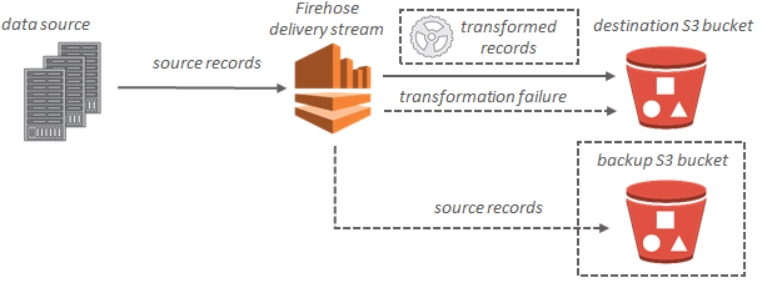

For Amazon S3 destinations, streaming data is delivered to your S3 bucket. If data transformation is enabled, you can optionally back up the source data to another Amazon S3 bucket.

Source: docs.aws.amazon.com

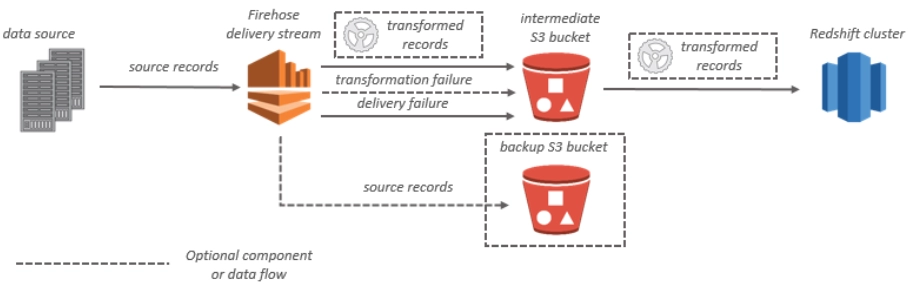

For Amazon Redshift destinations, streaming data is delivered to your S3 bucket first. Kinesis Data Firehose then issues an Amazon Redshift COPY command to load the data from your S3 bucket into your Amazon Redshift cluster. If data transformation is enabled, you can optionally back up the source data to another Amazon S3 bucket.

Source: docs.aws.amazon.com
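The S3-then-COPY flow described above shows up in how a Redshift destination is configured: it needs both an intermediate S3 location and a COPY command. The following is a minimal sketch of that configuration as a plain Python dictionary, using the field names of the Firehose `RedshiftDestinationConfiguration` structure. The function name, the ARNs, the table name, and the COPY options are hypothetical placeholders, not values from this article.

```python
def redshift_destination_config(role_arn, jdbc_url, table, username, password, bucket_arn):
    """Build a RedshiftDestinationConfiguration dict (illustrative helper, not an AWS API)."""
    return {
        "RoleARN": role_arn,
        "ClusterJDBCURL": jdbc_url,
        # Firehose runs COPY against this table after staging the data in S3.
        "CopyCommand": {
            "DataTableName": table,
            # Assumed options: JSON records, gzip-compressed staging files.
            "CopyOptions": "json 'auto' gzip",
        },
        "Username": username,
        "Password": password,
        # The intermediate bucket where data lands before COPY is issued.
        "S3Configuration": {
            "RoleARN": role_arn,
            "BucketARN": bucket_arn,
            "CompressionFormat": "GZIP",
        },
    }


if __name__ == "__main__":
    config = redshift_destination_config(
        role_arn="arn:aws:iam::123456789012:role/example-firehose-role",
        jdbc_url="jdbc:redshift://example-cluster.example.us-east-1.redshift.amazonaws.com:5439/dev",
        table="events",
        username="example_user",
        password="example_password",
        bucket_arn="arn:aws:s3:::example-staging-bucket",
    )
    print(config["CopyCommand"])
```

A dictionary like this would be passed as the `RedshiftDestinationConfiguration` argument when creating the delivery stream. In practice, credentials should come from a secrets store rather than source code.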

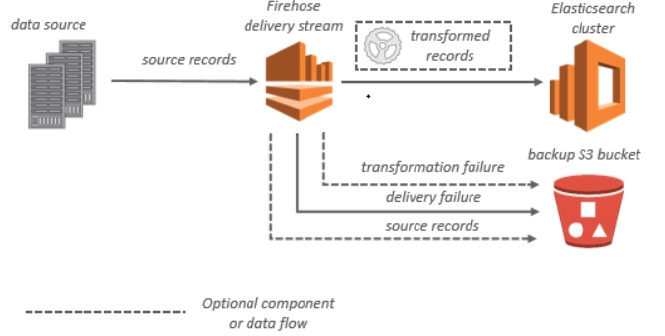

For OpenSearch Service destinations, streaming data is delivered to your OpenSearch Service cluster and can optionally be backed up to your S3 bucket at the same time.

Source: docs.aws.amazon.com

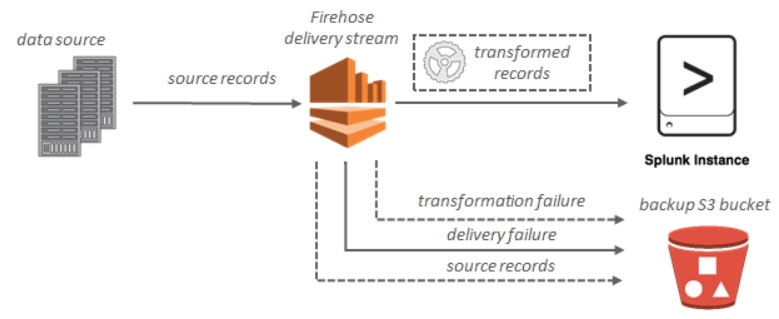

For Splunk destinations, streaming data is delivered to Splunk and can optionally be backed up to your S3 bucket at the same time.

Source: docs.aws.amazon.com

Now, we will learn about creating an Amazon Kinesis Data Firehose Delivery Stream.

Creating an Amazon Kinesis Data Firehose Delivery Stream

You can create a Kinesis Data Firehose delivery stream for your chosen destination by using the AWS Management Console or an AWS SDK.
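As a rough illustration of the SDK route, the sketch below uses boto3 (the AWS SDK for Python) to create a delivery stream with an Amazon S3 destination. The helper names, stream name, ARNs, and buffering values are assumptions made for this example. The request is built by a separate pure function so it can be inspected without AWS credentials.

```python
def s3_delivery_stream_request(stream_name, role_arn, bucket_arn, backup_bucket_arn=None):
    """Build keyword arguments for create_delivery_stream (illustrative helper)."""
    destination = {
        "RoleARN": role_arn,
        "BucketARN": bucket_arn,
        # Assumed buffering: flush every 5 MB or 300 seconds, whichever comes first.
        "BufferingHints": {"SizeInMBs": 5, "IntervalInSeconds": 300},
        "CompressionFormat": "GZIP",
    }
    if backup_bucket_arn is not None:
        # Optional backup of source records to a second bucket.
        destination["S3BackupMode"] = "Enabled"
        destination["S3BackupConfiguration"] = {
            "RoleARN": role_arn,
            "BucketARN": backup_bucket_arn,
        }
    return {
        "DeliveryStreamName": stream_name,
        "DeliveryStreamType": "DirectPut",  # producers write to the stream directly
        "ExtendedS3DestinationConfiguration": destination,
    }


def create_stream(request):
    """Send the request to AWS; requires boto3 and valid credentials."""
    import boto3  # imported here so the builder above works without boto3 installed

    client = boto3.client("firehose")
    return client.create_delivery_stream(**request)["DeliveryStreamARN"]


if __name__ == "__main__":
    request = s3_delivery_stream_request(
        "example-stream",
        "arn:aws:iam::123456789012:role/example-firehose-role",
        "arn:aws:s3:::example-destination-bucket",
    )
    print(request)  # call create_stream(request) to actually create the stream
```

Keeping the request construction separate from the network call makes it easy to review or unit test the configuration before anything is created in your account.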

After a delivery stream has been created, you can change its configuration at any time by using the Kinesis Data Firehose console or the UpdateDestination operation. The delivery stream remains in the ACTIVE state while its configuration is updated, so you can continue to send data to it. The updated configuration normally takes effect within a few minutes. Each configuration change increases the version number of the delivery stream by one, and the new version number is reflected in the names of the Amazon S3 objects that are delivered.
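The UpdateDestination operation needs the current version ID and the destination ID of the delivery stream, both of which are returned by DescribeDeliveryStream. The sketch below shows one way to wire these together with a boto3 Firehose client, assuming an S3 destination and a change to the buffering interval only. The helper names and the interval value are illustrative assumptions.

```python
def build_update_request(description, interval_seconds):
    """Turn a describe_delivery_stream response into update_destination arguments."""
    stream = description["DeliveryStreamDescription"]
    return {
        "DeliveryStreamName": stream["DeliveryStreamName"],
        # The update is applied only if this matches the stream's current version.
        "CurrentDeliveryStreamVersionId": stream["VersionId"],
        # Assumes the stream has a single destination.
        "DestinationId": stream["Destinations"][0]["DestinationId"],
        "ExtendedS3DestinationUpdate": {
            "BufferingHints": {"IntervalInSeconds": interval_seconds},
        },
    }


def apply_update(client, stream_name, interval_seconds):
    """Fetch the current version and submit the update with the given client."""
    description = client.describe_delivery_stream(DeliveryStreamName=stream_name)
    request = build_update_request(description, interval_seconds)
    client.update_destination(**request)
    return request


if __name__ == "__main__":
    # A hand-written response fragment, used here instead of a real AWS call.
    sample = {
        "DeliveryStreamDescription": {
            "DeliveryStreamName": "example-stream",
            "VersionId": "1",
            "Destinations": [{"DestinationId": "destinationId-000000000001"}],
        }
    }
    print(build_update_request(sample, 120))
```

With real credentials, `apply_update(boto3.client("firehose"), "example-stream", 120)` would perform the change, after which the stream's version ID is incremented.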

Security in Amazon Kinesis Data Firehose

Cloud security is the highest priority at AWS. As an AWS customer, you benefit from a data center and network architecture built to meet the requirements of the most security-sensitive organizations.

Security is a shared responsibility between AWS and you.

Security of the cloud: AWS is responsible for protecting the infrastructure that runs AWS services in the AWS Cloud. AWS also provides you with services that you can use securely. Third-party auditors regularly test and verify the effectiveness of AWS security as part of the AWS compliance programs.

Security in the cloud: Your responsibility is determined by the AWS service that you use. You are also responsible for other factors, including the sensitivity of your data, your company's requirements, and applicable laws and regulations.

Let’s move on to Frequently asked questions.

Frequently Asked Questions

What is Big Data?

Big Data is a term for extremely large collections of data that keep growing rapidly over time.

What are the characteristics of big data?

Big data has three characteristics, often called the three Vs: variety, velocity, and volume. Variety refers to the range of sources and formats the data comes from, velocity refers to the rate at which data is generated and processed, and volume refers to the amount of data generated.

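What is Kinesis Data Firehose?

Kinesis Data Firehose is part of the Kinesis streaming data platform. It is a fully managed service that delivers streaming data to destinations such as Amazon S3, Amazon Redshift, OpenSearch Service, and Splunk, so you don't need to write applications or manage resources to use it.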

What is the maximum size of a record in a Kinesis Data Firehose delivery stream?

A record sent to a delivery stream can be up to 1,000 KiB in size, measured before base64 encoding.
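A producer can check this limit before sending. The snippet below is a minimal sketch that assumes the limit is 1,000 KiB (1,024,000 bytes) applied to the raw payload before base64 encoding. The constant and function names are made up for this example.

```python
# Assumed limit: 1,000 KiB on the raw payload, before base64 encoding.
MAX_RECORD_BYTES = 1000 * 1024


def fits_in_one_record(payload: bytes) -> bool:
    """Return True if the payload is within the assumed per-record size limit."""
    return len(payload) <= MAX_RECORD_BYTES


if __name__ == "__main__":
    print(fits_in_one_record(b"a short log line\n"))
    print(fits_in_one_record(b"x" * (MAX_RECORD_BYTES + 1)))
```

Payloads that fail this check would need to be split or trimmed by the producer before being sent.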

What is a data producer?

A data producer is any source that sends records to a Kinesis Data Firehose delivery stream. For example, a web server that sends log data to a delivery stream is a data producer.
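To make the producer role concrete, here is a small sketch of a function that sends one log line to a delivery stream through the `put_record` call of a boto3 Firehose client. The function name and stream name are hypothetical, and the trailing newline is an assumption that makes the delivered records easier to split later. The client is passed in as a parameter so the function can also be exercised with a stand-in object.

```python
def send_log_line(client, stream_name, line):
    """Send a single log line as one Firehose record using the given client."""
    # Normalize to exactly one trailing newline and encode as bytes.
    data = (line.rstrip("\n") + "\n").encode("utf-8")
    return client.put_record(
        DeliveryStreamName=stream_name,
        Record={"Data": data},
    )


if __name__ == "__main__":
    import boto3  # requires boto3 and valid AWS credentials

    firehose = boto3.client("firehose")
    response = send_log_line(
        firehose,
        "example-stream",
        '127.0.0.1 - - "GET /index.html HTTP/1.1" 200',
    )
    print(response["RecordId"])
```

A web server would typically call something like this from its logging path, or batch several lines together to reduce the number of requests.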

Conclusion

In this article, we discussed Amazon Kinesis Data Firehose in detail. We started with a brief introduction and then covered the key concepts, data flow, and security in Amazon Kinesis Data Firehose.

Happy Learning!