Approach

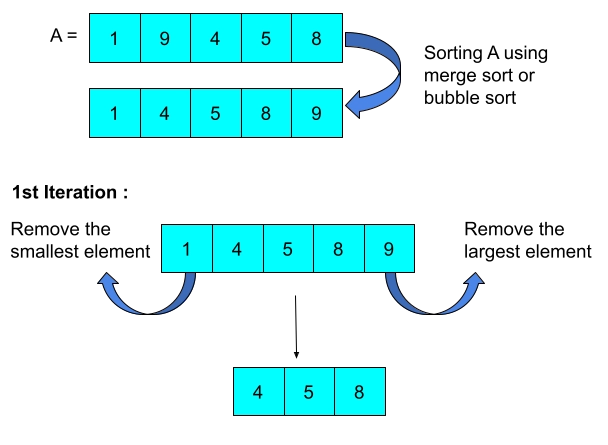

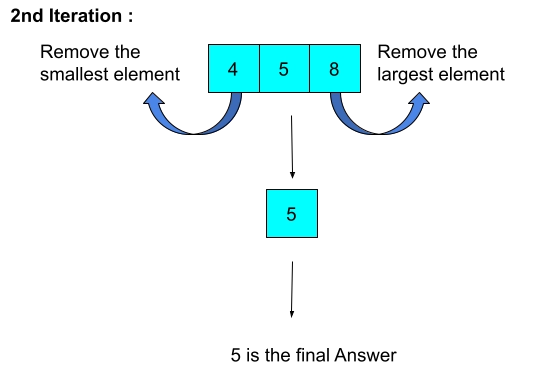

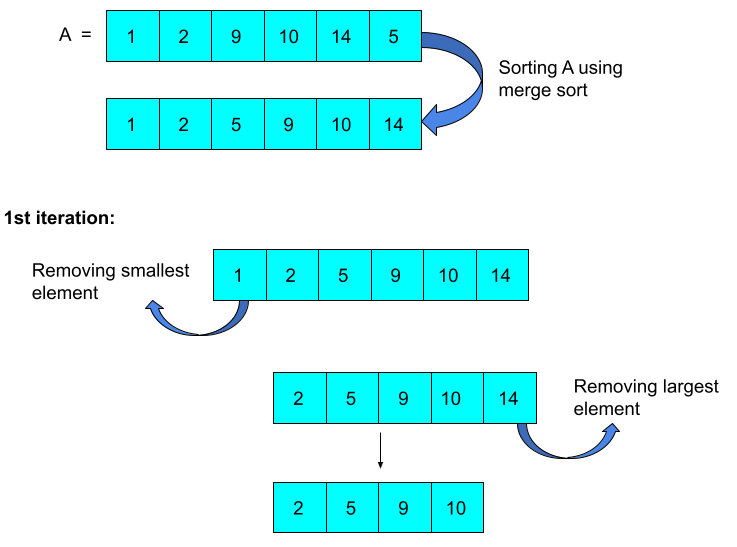

Let 'A' be the given array having N elements.

Observation 1: To maximize the last number that would be left, Alice will remove the smallest element from the array.

Observation 2: Similarly, Bob will remove the maximum element from the array to minimize the last element that would be left.

Observation 3: If N is greater than two, then in one step, we can remove the smallest and greatest element from the array, and if N is two, we will remove the smallest element because Alice makes the first move.

Brute Force Approach

We will iterate the array N/2 times. We find the minimum and maximum elements in each step and remove it.

We can find the smallest and greatest element in linear time complexity. So the overall time complexity is O(N^2). If N is 10^6, then in the worst case, the total number of operations is 10^12 . So this solution is inefficient.

Efficient Approach

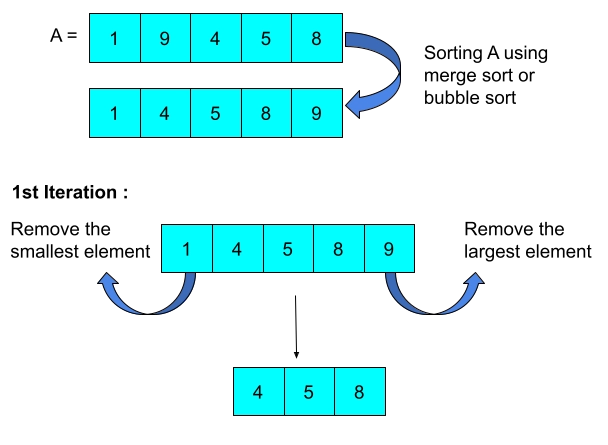

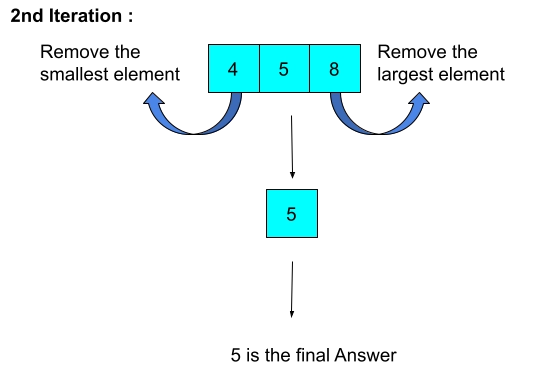

Observation 4: If we sort the array in non-decreasing order, then in each step, we can remove the first and the last element from the array. So in each step, the size of the given array decreases by two. Therefore, the answer exists in the middle of the array.

Now there are two cases:

- If N is odd (N%2 == 1): if the size of the array is odd and we repeatedly remove the first and last element from the array then, the last element that would remain is the element at the index N/2 (zero-based indexing).

- If N is even (N%2 == 1): if the size of the array is even and we repeatedly remove the first and last element from the array, then after (N - 1)/2 steps, two numbers will remain, one at index (N - 1)/2 and other at index N/2. As Alice makes the first move, the final answer is the element at index N/2.

Observation 5: The range of N is 0 to 10^5. So we can't use bubble sort, insertion sort, and selection sort to sort the given array because they work in O(N^2) time complexity, so in the worst case, the total number of operations goes up to 10^10, which is inefficient. We can use merge sort or quicksort as they work in O(NlogN) time complexity. We can also use the inbuilt Sorting functions.

Example

Case1 - N is odd

Case 2 - N is even

#include <bits/stdc++.h>

#define int long long

using namespace std;

//merge sort

void merge(int B[], int start, int mid, int end){

int lft[mid - start + 1];

int rgt[end - mid];

for(int i = start; i < mid + 1; i++){

lft[i - start] = B[i];

}

for(int i = mid + 1; i <= end; i++){

rgt[i - (mid + 1)] = B[i];

}

int lftIndex = 0, rgtIndex = 0, ind = start;

for( ; lftIndex <= mid - start && rgtIndex < end - mid; ind++){

if(lft[lftIndex] < rgt[rgtIndex]){

B[ind] = lft[lftIndex++];

}

else{

B[ind] = rgt[rgtIndex++];

}

}

while(lftIndex <= mid - start){

B[ind++] = lft[lftIndex++];

}

while(rgtIndex < end - mid){

B[ind++] = rgt[rgtIndex++];

}

}

void mergeSort(int B[], int start, int end){

if(end <= start)

return;

mergeSort(B, start, (start + end)/2);

mergeSort(B, ((start + end)/2) + 1, end);

merge(B, start, (start + end)/2, end);

}

signed main(){

int N;

cin >> N;

int A[N];

for(int i = 0; i < N; ++i)

cin >> A[i];

mergeSort(A, 0, N - 1); //sorting the given array using merge sort

//for both the cases (even and odd) the final answer is the element at index N/2

cout << A[N/2] << "\n";

}

Time Complexity

Merge sort takes O(N log N) time. So the overall time complexity is O(N log N).

Space Complexity

If we use merge sort, we need an extra array of size N. So, in that case, the space complexity is O(N). But, if we use quicksort with appropriate pivot, the space complexity can be reduced to O(logN).

Also Read - Selection Sort in C

FAQs

-

What is merge sort?

Merge sort is one of the most efficient sorting techniques. It follows divide and conquer strategy. It first divides the original array into N subarrays of size one each and then repeatedly merges two in sorted order.

-

What is the time complexity of merge sort?

The time complexity of merge sort is O(N logN).

-

What is quicksort?

Merge sort is one of the most efficient sorting techniques. It follows divide and conquer strategy. It first divides the original array into N subarrays of size one each and then repeatedly merges two in sorted order. The time complexity of quicksort is also O(N logN).

Key Takeaways

In this article, we solved a problem by using the sorting algorithms. Having a good grasp of sorting algorithms is very important to crack the coding interviews. Check out this coding ninjas blog on sorting algorithms for getting a better hold on it.

Recommended Readings:

To learn more about such data structures and algorithms, Coding Ninjas Studio is a one-stop destination. This platform will help you to improve your coding techniques and give you an overview of interview experiences in various product-based companies by providing Online Mock Test Series and many more benefits.

Happy learning!