Introduction

Lexical analysis is the first phase for the compiler, also known as a scanner. It takes the code from the modified language preprocessors written in sentences and breaks these sentences into tokens by removing comments, extra white spaces, etc., in the source code.

Refer to Lexical Analysis in Compiler Design for more detail on the topic.

This article will discuss previous years' gate questions on lexical analysis with proper solutions and explanations.

Gate Questions on Lexical Analysis

Let us now discuss the previously asked gate questions on Lexical Analysis.

1. The number of tokens in the given C statement is ______.

printf("pt = %d, &pt = %x", pt, &pt); [GATE 2000]

Solution: 10.

In a C program, the essential component recognized by the compiler is the "token". A token is a source-program content that the compiler does not break down into component elements. We have six C tokens: identifiers, constants, keywords, operators, string literals, and other separators. We have ten tokens within the above printf statement. Underneath are tokens in the above code:-

printf

(

"pt = %d, &pt = %x"

,

pt

,

&

pt

)

;

2. In any compiler, we can recognize keywords of a language during ____. [GATE CS 2011]

- Parsing of the program.

- The code generation.

- Lexical analysis of the program.

- Dataflow analysis.

Solution: (C).

Lexical analysis is the method of changing over a grouping of characters into an arrangement of tokens. A token can be a keyword.

3. Consider the following statements:

(1) The output of the lexical analyzer is groups of characters.

(2) Total tokens count in the printf("pt=%d, &pt=%x", pt, &pt); are 11.

(3) Symbol table can be implemented with an array and hash table but not a tree.

Which of the given statement(s) is/are correct? [GATE CS 2011]

- Only (1).

- Only (2) and (3).

- Only (1), (2), and (3).

- None of the above.

Solution: (D).

The correct statement is as follows

(1) The output of the lexical analyzer is tokens.

(2) Total tokens count in printf("pt=%d, &pt=%x", pt, &pt); are 10.

(3) We can implement a symbol table with an array, hash table, tree, and linked lists.

So, option (D) is correct.

4. Which one of the below statements is FALSE? [GATE CSE 2018]

- We can use Context-free grammar for specifying both lexical and syntax rules.

- We do type checking before parsing.

- We can translate high-level language programs to different Intermediate Representations.

- Function arguments can be passed with the program stack.

Solution: (B).

Type checking is done at the semantic analysis phase, and parsing is done at the syntax analysis phase. And we know the syntax analysis phase comes before semantic analysis. So Option (B) is False.

5. The lexical Analysis for any modern programming language such as Java needs the power of which one of the below machine models in a sufficient and necessary sense?

- Finite state Automata

- Non-deterministic automata

- Deterministic pushdown automata

- Turing machine

Solution: (A)

In the lexical analysis, finite automata are used to produce tokens in the form of keywords, identifiers, and constants from the input program. Pattern recognition is used to search keywords with string-matching algorithms.

6. The lexical analyzer uses the given patterns for recognizing three tokens, T1, T2, and T3, over the alphabets a,b,c. T1: a?(b∣c)*a & T2: b?(a∣c)*b & T3: c?(b∣a)*c. Note: 'x?' means 1 or 0 occurrences of the symbol x. Also, the analyzer outputs the token matching the longest possible prefix. If the analyzer processes the string "bbaacabc", which of the below is the sequence of tokens it outputs? [GATE CSE 2018]

- T1T2T3

- T1T1T3

- T2T1T3

- T3T3

Solution: (D).

We can rewrite the tokens as: T1 : (b+c)* a + a(b+c)* a and T2 : (a+c)* b + b(a+c)* b and T3 : (b+a)* c + c(b+a)* c.

The given String is "bbaacabc" the longest matching prefix is "bbaac" (That may be generated by T3). The remaining part "abc" can again be generated by T3. Hence, the answer is T3T3.

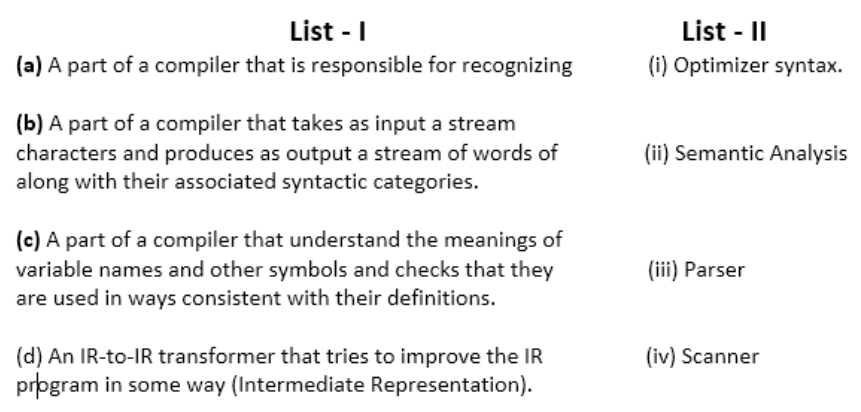

7. Match the description of various parts of a classic optimizing compiler in List - I, with their names in List - II:

- (a) - (iii), (b) - (iv), (c) - (ii), (d) - (i).

- (a) - (iv), (b) - (iii), (C) - (ii), (d) - (i).

- (a) - (ii), (b) - (iv), (C) - (i), (d) - (iii).

- (a) - (ii), (b) - (iv), (c) - (iii), (d) - (i).

Solution: (A).

- The parser is a part of the compiler and is responsible for syntax recognition.

- The lexical analyzer uses a scanner (or tokenization).

- In Semantic Analysis, consistency and definition of syntax are checked.

- An optimizer is used to improve the IR program.

Hence, option (A) is correct.

8. The output of any lexical analyzer is?

- A parse tree.

- Machine code.

- Intermediate code.

- A stream of tokens.

Solution: (D)

The Lexical Analysis produces a stream of tokens as output, consisting of identifiers, keywords, separators, operators, and literals.

9. Consider the given statements related to the compiler construction:

(1.) Lexical Analysis is specified by context-free grammar and implemented with pushdown Automata.

(2.) Syntax Analysis is defined by regular expressions and implemented with a finite-state machine.

Which of the above statement(s) is/are correct?

- Only (1)

- Only (2)

- Both (1) and (2)

- Neither (1) nor (2)

Solution: (D).

Option D is correct because:

Statement 1 is false because the lexical analysis phase is specified by regular expression and implemented by FA (Finite automata)

Statement 2 is false because syntax analysis is specified by context-free grammar and implemented by PDA (Pushdown Automata)

10. Which statement(s) regarding the linker software is/are true? (1) A function of the linker is to combine various object modules into a single load module. (2) A function of the linker is to replace absolute references in an object module with symbolic references to locations in other modules.

- Only (1)

- Only (2)

- Both (1) and (2)

- Neither (1) nor (2)

Solution: (A)

The linker is a computer program that combines two or more records produced by the compiler into a single executable form. Choice (1) is correct, but choice (2) doesn't take after the linker.

11. From the provided data: a b b a a b b a a b which one of the below is not a word in the dictionary created by LZ-coding (given the initial terms are a, b)?

- b a

- b b

- a b

- b a a b

Solution: B and D

Input is "a b b a a b b a a b". According to L-Z coding: The dictionary contains: a | b | ba | ab| baa (is already present) "baab" and "bb" are not there. So, options B and D are correct.

12. Debugger is a program that

- Allows to modify and examine the contents of registers.

- Does not allow execution of a part of a program

- Allows to set breakpoints, execute a part of the program, and display contents of the register.

- All of the above

Solution : (C).

A debugger is a program used by programmers to debug and test a target program. Debuggers can use instruction-set simulators rather than running a program directly on the processor to achieve higher control over its execution. It also allows to set breakpoints, execute a segment of the program and display the contents of the register. So, option (C) is correct.

This article has covered the previous year's gate questions on Lexical Analysis. Also, check out the Gate Syllabus for CSE.