Introduction

Before learning MO’s Algorithm, let us take a quick look at the prerequisites through which we can solve it.

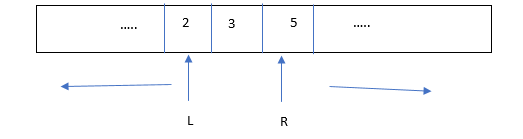

In competitive programming, we come across questions which involves queries mostly static queries which are given as vectors or arrays. Each such queries represent a run in the code. So, do you think running the code each time for every query operation is fine? Obviously, it is a brute force approach and it will fail most of the times in a coding platform as there will be a huge number of queries. So, what we can do? Can we always break up the array in two parts for like we do in Binary Search? Not really! And nor it will be beneficial.

Let us consider a particular part of the array may be some xth part of the array size. We divide the array into x parts to reduce our time complexity. By using complex mathematical calculations – differentiation we found out that this x is nothing but, where n is the size of the array. We come to the conclusion that dividing the array into blocks of elements help to reduce the time complexity significantly.

This very technique is called Square root Decomposition (Don’t worry we will need this in Mo’s Algorithm).

Square Root Decomposition

In this technique, we divide the array into blocks of √n elements. When we process a query from l to r, the idea is to store the pre-processed answer in the blocks array so when we get a block in a range of l to r we do not iterate through all the √n elements of the block rather we get the value from block array in O(1) time. This method reduces the time complexity by √n . Let us see this with an example of Range Sum Query.

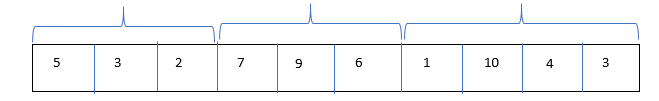

Array Given

There are 10 elements in the array hence n = 10, now we divide this array into blocks of 3 elements each and 4 elements in the last block.

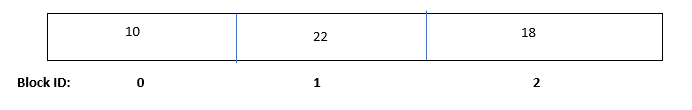

Our block array will look like:

Block Ids are given by the formulae:

Here remainder 0 refers to starting of new block.

The idea is to pre-process the array and store the sum of each blocks in blocks array with the above shown block ids. This helps when we process a query from l to r, if the range includes some blocks, we directly get their sum from blocks array in O(sqrt(n)) time.

Let us see the code implementation of range sum of Square Root Decomposition:

#include<bits/stdc++.h>

using namespace std;

vector v(0);

int block_sum[1000]{0};

int blk_size; root n block sizes

//update operation

void update(int idx,int val){

int block_idx = idx/blk_size; // Gives the block ids

block_sum[block_idx] = block_sum[block_idx] + val – v[idx];

v[idx] = val;

}

//Query operation

int querry(int l,int r){

int sum = 0;

//case1

while(l<r && l%blk_size!=0 && l!=0){

sum+=v[l];

l++;

}

//case2

while(l+blk_size<=r){

sum +=block_sum[l/blk_size];

l+=blk_size;

}

//case3

while(l<=r){

sum +=v[l];

l++;

}

return sum;

}

void preprocess(int n){

int blk_idx = -1;

for(int i = 0;i<n;i++){

if(i%blk_size==0)

blk_idx++;

block_sum[blk_idx]+=v[i];

}

}

void printblock(){

for(int i = 0;i<blk_size;i++)

cout<<i<<” “<<block_sum[i]<<endl;

}

int main() { //Driver Program

int n; cin>>n;

blk_size = sqrt(n);

v.clear();

for(int i = 0;i>num;

v.push_back(num);

} preprocess(n);

printblock();

update(4,11);

printblock();

return 0;

}