What are Tensors?

Tensors are N-dimensional containers for data. We can store data in tensors much as we do in NumPy arrays, and a tensor can have any number of dimensions: a one-dimensional tensor is a vector, a two-dimensional tensor is a matrix, a three-dimensional tensor can be pictured as a cube of values, and so on.
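
As a small illustration of the dimensions described above, the sketch below builds a vector, a matrix, and a three-dimensional tensor and prints their `ndim` and `shape`. The variable names and values are arbitrary placeholders chosen for this example.

```python
import torch

# Tensors of increasing dimensionality; the values are arbitrary placeholders.
vector = torch.tensor([1, 2, 3])            # 1-D: shape (3,)
matrix = torch.tensor([[1, 2], [3, 4]])     # 2-D: shape (2, 2)
cube = torch.zeros(2, 3, 4)                 # 3-D: shape (2, 3, 4)

for name, t in [("vector", vector), ("matrix", matrix), ("cube", cube)]:
    print(name, "ndim =", t.ndim, "shape =", tuple(t.shape))
```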

Operating on Tensors using PyTorch Functions

torch.tensor()

We use this function to create a new tensor with the given data.

import torch

# Pass the data as a nested list and set the data type explicitly

tensor = torch.tensor(data=[[1,20],[2,19],[3,18],[4,17],[5,16],[6,15],[7,14],[8,13],[9,12],[10, 11]], dtype=torch.int64)

print(tensor)

torch.from_numpy()

If we already have a NumPy array, we can use this function to create a tensor with the same data and dimensions as the array. The tensor shares memory with the array, so modifying one also changes the other.

import numpy as np

numpy_array = np.array([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor = torch.from_numpy(numpy_array)

print(tensor)
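
One point worth seeing in code is that a tensor created with torch.from_numpy() uses the same memory as the source array. The sketch below contrasts it with torch.tensor(), which copies the data; the names `shared` and `copied` are made up for this example.

```python
import numpy as np
import torch

numpy_array = np.array([1, 2, 3])

shared = torch.from_numpy(numpy_array)   # shares memory with the array
copied = torch.tensor(numpy_array)       # makes an independent copy

numpy_array[0] = 100                     # modify the NumPy array in place

print(shared)   # the change is visible through the shared tensor
print(copied)   # the copy still holds the original value
```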

torch.linspace()

We use linspace() to create a one-dimensional tensor of evenly spaced values from the start value to the end value, both included. The steps argument specifies the number of data points.

tensor1 = torch.linspace(start = 10, end = 40, steps = 20)

tensor2 = torch.linspace(start = 1, end = 10, steps = 5)

print("Tensor 1: ", tensor1, "\n")

print("Tensor 2: ", tensor2)
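
To make the spacing concrete, here is a minimal check. With start = 1, end = 10 and steps = 5, consecutive values should differ by (10 - 1) / (5 - 1) = 2.25. The `expected` tensor is written out by hand for comparison.

```python
import torch

tensor = torch.linspace(start=1, end=10, steps=5)

# Spacing between neighbours: (end - start) / (steps - 1) = 9 / 4 = 2.25
expected = torch.tensor([1.0, 3.25, 5.5, 7.75, 10.0])

print(tensor)
print("Matches the hand-computed values:", torch.allclose(tensor, expected))
```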

torch.eye()

We use the eye() function to create a two-dimensional tensor (of n*m shape) with the diagonal elements as 1 and the rest as 0.

# If only one value is given, then n and m will have the same value, and a square tensor will be created

tensor1 = torch.eye(5)

tensor2 = torch.eye(n = 5, m = 10)

print("Tensor 1: \n", tensor1, "\n")

print("Tensor 2: \n", tensor2)
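
A quick way to confirm where the ones sit is to look at a small case. The sketch below also checks that multiplying by a square eye() tensor leaves a matrix unchanged; the matrix `a` is an arbitrary example.

```python
import torch

identity = torch.eye(3)       # 3x3, ones on the main diagonal
rect = torch.eye(n=2, m=4)    # 2x4, ones where row index equals column index

print(identity)
print(rect)

# Matrix-multiplying by the identity returns the same matrix.
a = torch.tensor([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]])
print(torch.equal(identity @ a, a))
```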

torch.full()

We use this function to create a tensor of the desired shape in which every element has the same value.

tensor = torch.full(size = (4, 6), fill_value = 28)

print(tensor)

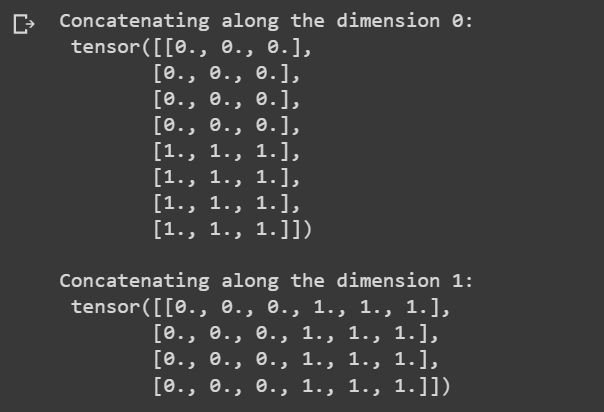

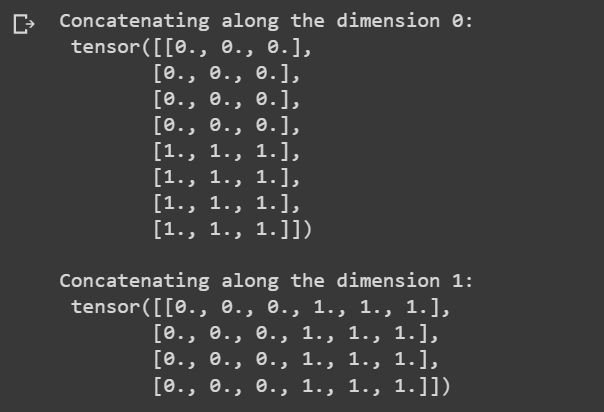

torch.cat()

We can use the cat() function to concatenate two or more tensors along a given dimension (dimension 0 by default). The tensors must have the same shape in every other dimension.

tensor1 = torch.zeros(4, 3) # creates a tensor of shape 4x3 and all zeros

tensor2 = torch.ones(4, 3) # creates a tensor of shape 4x3 and all ones

concat_tensor1 = torch.cat((tensor1, tensor2))

print("Concatenating along the dimension 0: \n", concat_tensor1, "\n")

concat_tensor2 = torch.cat((tensor1, tensor2), dim=1)

print("Concatenating along the dimension 1: \n", concat_tensor2)

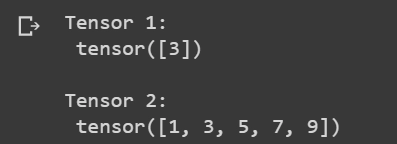

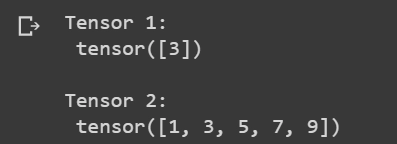
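
The effect of the dimension argument is easiest to see from the resulting shapes. This is a minimal sketch; the tensor `c` is an extra example showing that sizes may differ along the dimension being concatenated.

```python
import torch

a = torch.zeros(4, 3)
b = torch.ones(4, 3)

rows = torch.cat((a, b), dim=0)   # joins along rows: shape (8, 3)
cols = torch.cat((a, b), dim=1)   # joins along columns: shape (4, 6)

print(tuple(rows.shape))
print(tuple(cols.shape))

# Shapes only need to match outside the concatenation dimension.
c = torch.ones(2, 3)
mixed = torch.cat((a, c), dim=0)  # (4, 3) joined with (2, 3) gives (6, 3)
print(tuple(mixed.shape))
```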

torch.take()

The take() function picks values out of another tensor by index. It treats the input tensor as if it were flattened to one dimension and returns the values at the given indexes. The result has the same shape as the index tensor, so a one-dimensional index tensor gives a one-dimensional result.

original_tensor = torch.tensor([1, 2, 3, 4, 5, 6, 7, 8, 9, 10])

tensor1 = torch.take(original_tensor, torch.tensor([2]))

tensor2 = torch.take(original_tensor, torch.tensor([0, 2, 4, 6, 8]))

print("Tensor 1: \n", tensor1, "\n")

print("Tensor 2: \n", tensor2)
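
When the input has more than one dimension, take() indexes into its flattened form. The sketch below uses a small 2x3 matrix chosen for this example.

```python
import torch

matrix = torch.tensor([[10, 20, 30], [40, 50, 60]])
# Flattened view used for indexing: [10, 20, 30, 40, 50, 60]

picked = torch.take(matrix, torch.tensor([0, 2, 5]))
print(picked)   # values at flat positions 0, 2 and 5

# The result takes the shape of the index tensor.
shaped = torch.take(matrix, torch.tensor([[0, 1], [4, 5]]))
print(shaped)
```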

torch.rand()

This function creates a tensor of the provided shape with random values drawn uniformly from the interval [0, 1).

tensor = torch.rand((4, 6))

print(tensor)

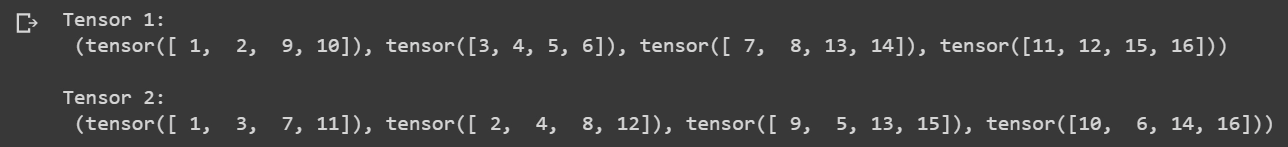

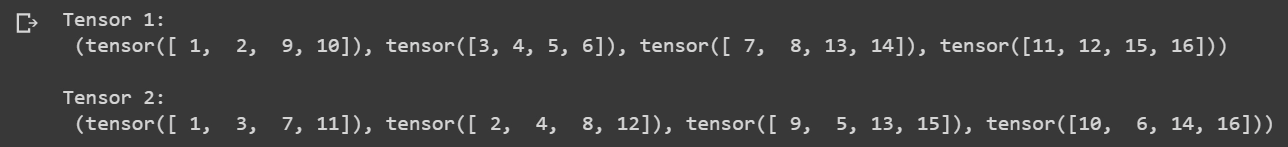
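
Because the values are random, the output changes on every run. If reproducible values are needed, one option is to fix the seed with torch.manual_seed(); the seed value 0 below is an arbitrary choice for this sketch.

```python
import torch

torch.manual_seed(0)          # fix the random number generator state
first = torch.rand((4, 6))

torch.manual_seed(0)          # reset to the same state
second = torch.rand((4, 6))

print("Same values after reseeding:", torch.equal(first, second))
print("All values in [0, 1):", bool((first >= 0).all() and (first < 1).all()))
```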

torch.unbind()

The unbind() function removes the specified dimension from a tensor and returns a tuple of all the slices along that dimension.

original_tensor = torch.tensor([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor1 = torch.unbind(original_tensor, dim=0)

tensor2 = torch.unbind(original_tensor, dim=1)

print("Tensor 1: \n", tensor1, "\n")

print("Tensor 2: \n", tensor2)
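
The return value is a tuple of tensors rather than a single tensor, which a smaller example makes easier to see. Rebuilding the input with torch.stack() is added here only as a sanity check and is not part of the original example.

```python
import torch

original = torch.tensor([[1, 2, 3], [4, 5, 6]])

rows = torch.unbind(original, dim=0)   # 2 tensors, each of shape (3,)
cols = torch.unbind(original, dim=1)   # 3 tensors, each of shape (2,)

print(type(rows).__name__, len(rows), rows[0])
print(type(cols).__name__, len(cols), cols[0])

# Stacking the slices along the same dimension restores the original tensor.
rebuilt = torch.stack(rows, dim=0)
print(torch.equal(rebuilt, original))
```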

Arithmetic operations on Tensors

Addition of two tensors

We can add two tensors of the same shape element-wise using the “+” operator.

# Addition

tensor1 = torch.tensor([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor2 = torch.tensor([[19, 18, 11, 10], [17, 16, 15, 14], [13, 12, 7, 6], [9, 8, 5, 4]])

tensor = tensor1 + tensor2

print(tensor1, "\n\t\t + \n", tensor2, "\n\t\t = \n", tensor)

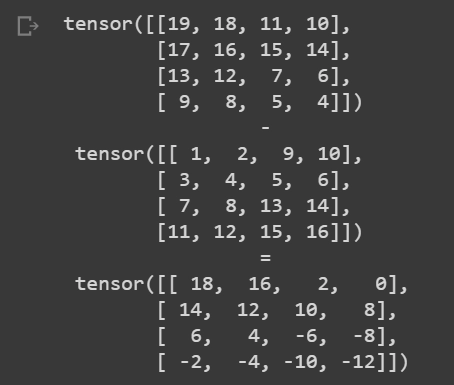

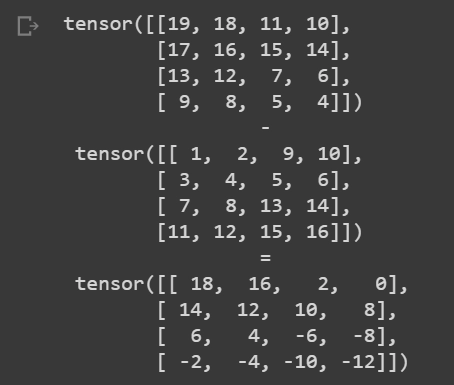
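
For completeness, the “+” operator has a function form, torch.add(), that gives the same result. The sketch below also shows that a tensor of a compatible smaller shape is broadcast across rows; the values are arbitrary.

```python
import torch

a = torch.tensor([[1, 2], [3, 4]])
b = torch.tensor([[10, 20], [30, 40]])

via_operator = a + b
via_function = torch.add(a, b)
print(torch.equal(via_operator, via_function))

# A 1-D tensor of length 2 is broadcast to every row of `a`.
row = torch.tensor([100, 200])
broadcast_sum = a + row
print(broadcast_sum)
```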

Subtraction of two tensors

We can either use the “-” operator or call the sub() function from the PyTorch library for element-wise subtraction.

# Subtraction

tensor1 = torch.tensor([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor2 = torch.tensor([[19, 18, 11, 10], [17, 16, 15, 14], [13, 12, 7, 6], [9, 8, 5, 4]])

sub_tensor1 = tensor2 - tensor1 # METHOD 1

sub_tensor2 = torch.sub(tensor2, tensor1) # METHOD 2

print(tensor2, "\n\t\t - \n", tensor1, "\n\t\t = \n", sub_tensor2)

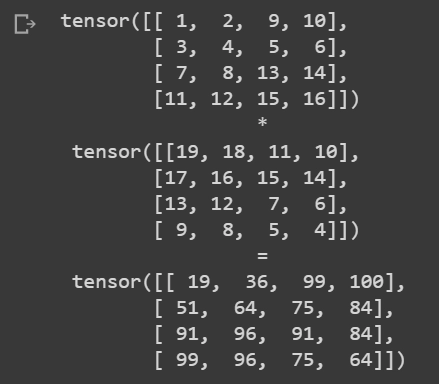

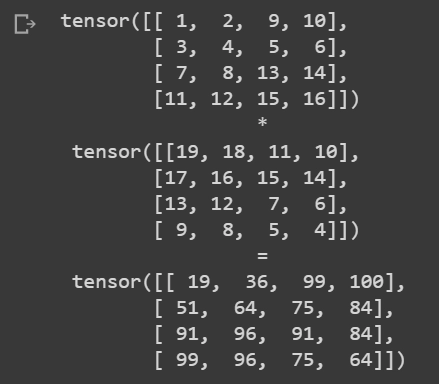

Multiplication of two tensors

We can use the multiplication operator “*” or the mul() function from the PyTorch library to multiply two tensors element-wise.

# Multiplication

tensor1 = torch.tensor([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor2 = torch.tensor([[19, 18, 11, 10], [17, 16, 15, 14], [13, 12, 7, 6], [9, 8, 5, 4]])

mul_tensor1 = tensor1 * tensor2

print(tensor1, "\n\t\t * \n", tensor2, "\n\t\t = \n", mul_tensor1)

tensor = torch.tensor([[1, 2], [3, 4]])

mul_tensor = torch.mul(tensor, tensor)

print("The square tensor of \n", tensor, "\n\t is \n", mul_tensor)

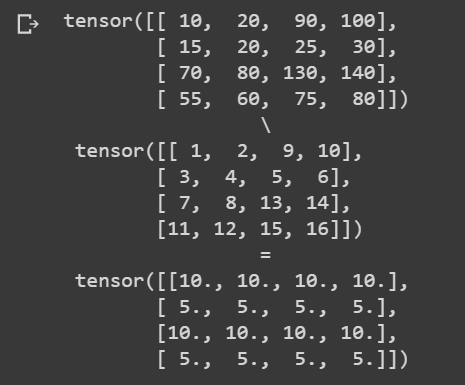

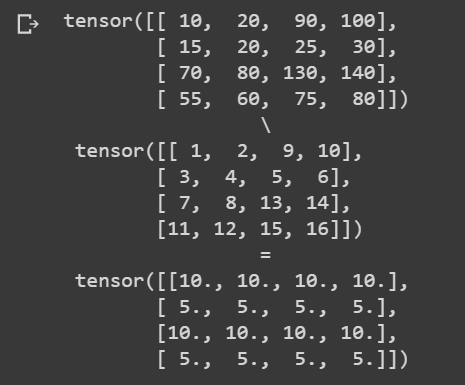
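
Both “*” and mul() multiply element by element, which is different from matrix multiplication. The short sketch below contrasts the two on the same 2x2 tensor; the “@” operator is brought in here only for comparison.

```python
import torch

tensor = torch.tensor([[1, 2], [3, 4]])

elementwise = torch.mul(tensor, tensor)   # each element squared
matrix_product = tensor @ tensor          # rows times columns

print("Element-wise:\n", elementwise)
print("Matrix product:\n", matrix_product)
```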

Division of two tensors

As with multiplication, we can perform element-wise division in two ways: with the division operator “/” or with the div() function from the PyTorch library.

# Division

tensor1 = torch.tensor([[1, 2, 9, 10], [3, 4, 5, 6], [7, 8, 13, 14], [11, 12, 15, 16]])

tensor2 = torch.tensor([[10, 20, 90, 100], [15, 20, 25, 30], [70, 80, 130, 140], [55, 60, 75, 80]])

div_tensor = torch.div(tensor2, tensor1)

print(tensor2, "\n\t\t / \n", tensor1, "\n\t\t = \n", div_tensor)

tensor1 = torch.tensor([[1, 2]])

tensor2 = torch.tensor([[5, 6], [9, 8]])

div_tensor = tensor2 / tensor1

print(tensor2, "\n\t / \n", tensor1, "\n\t = \n", div_tensor)
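
Note that dividing integer tensors with “/” or div() performs true division and returns floating-point values. If integer results are wanted, div() accepts a rounding_mode argument in recent PyTorch versions; the sketch below assumes a version that supports it.

```python
import torch

a = torch.tensor([7, 8, 9])
b = torch.tensor([2, 2, 2])

true_div = a / b                                     # float result
floor_div = torch.div(a, b, rounding_mode="floor")   # rounds down, stays integer

print(true_div, true_div.dtype)
print(floor_div, floor_div.dtype)
```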

Frequently Asked Questions

Q1. What are Tensors?

Ans. Tensors are N-dimensional containers for data. We can store data in tensors much as we do in NumPy arrays, and a tensor can have any number of dimensions: a one-dimensional tensor is a vector, a two-dimensional tensor is a matrix, a three-dimensional tensor can be pictured as a cube of values, and so on.

Q2. What makes PyTorch different from other ML Libraries?

Ans. The following features distinguish PyTorch from many other ML libraries:

- It offers dynamic computation graphs.

- It can make use of standard Python flow control.

- It allows variables and gradients to be inspected dynamically at run time.

Q3. How can we increase the size of a tensor?

Ans. We can expand a tensor using its expand() method (Tensor.expand()). It returns a view of the tensor in which dimensions of size 1 are enlarged to the sizes given in the arguments, without copying data.
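
A minimal sketch of expanding a tensor, assuming a column tensor of shape (3, 1) made up for this example:

```python
import torch

column = torch.tensor([[1], [2], [3]])   # shape (3, 1)

# The size-1 dimension is expanded to 4; the size-3 dimension is kept as is.
expanded = column.expand(3, 4)

print(tuple(expanded.shape))
print(expanded)
```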

Q4. Can we convert a tensor to a list?

Ans. Yes. We can convert a tensor to a Python list by calling the tolist() method on the tensor.
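
For example, with a small tensor chosen for illustration, tolist() returns nested Python lists that mirror the tensor's shape:

```python
import torch

tensor = torch.tensor([[1, 2], [3, 4]])

as_list = tensor.tolist()    # nested Python lists of plain Python ints
print(as_list, type(as_list).__name__)
```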
