Introduction

Singular Value Decomposition (SVD) is a factorization of a matrix. A given matrix is broken down into three matrices whose matrix product equals the original matrix. SVD is one of the most widely used methods for matrix decomposition, with applications in data reduction, data compression, and other areas. This article covers its mathematical formulation, code, and a worked example.

Mathematical Equation

Let us consider a matrix A of dimension m×n. As shown below, we can break matrix A into three matrices U, Σ, and V.

$A = U \Sigma V^T$

($V^T$ denotes the transpose of the matrix V.)

Here the dimensions of U are m×m, the dimensions of Σ are m×n, and the dimensions of V are n×n.
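The shapes above can be checked numerically. The snippet below is a minimal sketch using NumPy's `np.linalg.svd`; the 2×3 matrix `A` is a hypothetical example chosen for illustration, not one taken from this article's figures. Note that NumPy returns the singular values as a 1-D array, so the m×n matrix Σ has to be built by hand.

```python
import numpy as np

# Hypothetical 2x3 example matrix (an assumption made for illustration).
A = np.array([[3.0, 2.0, 2.0],
              [2.0, 3.0, -2.0]])
m, n = A.shape

# full_matrices=True returns U as m x m and Vt (V transposed) as n x n.
U, s, Vt = np.linalg.svd(A, full_matrices=True)

# s is a 1-D array of singular values; place it on the diagonal of an m x n matrix.
Sigma = np.zeros((m, n))
Sigma[:len(s), :len(s)] = np.diag(s)

# The product of the three factors should reproduce A.
A_rebuilt = U @ Sigma @ Vt

print(U.shape, Sigma.shape, Vt.shape)  # (2, 2) (2, 3) (3, 3)
print(np.allclose(A, A_rebuilt))       # True
```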

The columns of U are called the left singular vectors of A, and the columns of V are called the right singular vectors of A. Σ, known as the sigma matrix, is a rectangular diagonal matrix whose diagonal entries are the singular values of A.

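- The columns of U are orthonormal eigenvectors of $AA^T$.

- The columns of V (the rows of $V^T$) are orthonormal eigenvectors of $A^TA$.

- Σ holds the singular values on its diagonal. The non-zero singular values are the square roots of the non-zero eigenvalues of $A^TA$, which are also the non-zero eigenvalues of $AA^T$.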
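These three properties can be verified directly. The following sketch assumes a hypothetical 2×3 matrix `A` (not the one from the figures) and checks that the factors returned by `np.linalg.svd` satisfy the eigenvector relations and are orthonormal.

```python
import numpy as np

# Hypothetical example matrix (an assumption made for illustration).
A = np.array([[3.0, 2.0, 2.0],
              [2.0, 3.0, -2.0]])

U, s, Vt = np.linalg.svd(A)
V = Vt.T

# Columns of U are eigenvectors of A A^T with eigenvalues sigma_i^2.
left_ok = np.allclose((A @ A.T) @ U, U @ np.diag(s**2))

# Columns of V are eigenvectors of A^T A; the extra column has eigenvalue 0.
eigs_for_V = np.concatenate([s**2, np.zeros(V.shape[1] - len(s))])
right_ok = np.allclose((A.T @ A) @ V, V @ np.diag(eigs_for_V))

# Both U and V have orthonormal columns.
ortho_ok = (np.allclose(U.T @ U, np.eye(U.shape[0]))
            and np.allclose(V.T @ V, np.eye(V.shape[0])))

print(left_ok, right_ok, ortho_ok)
```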

Example

Let us understand SVD through an example.

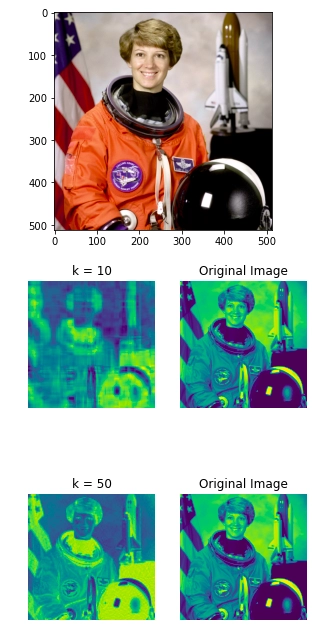

Consider a matrix A and find its SVD.

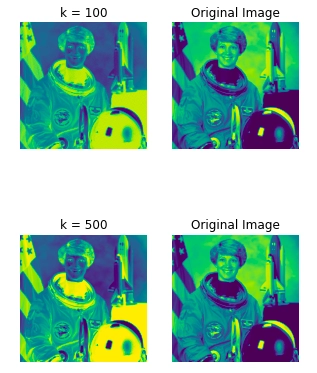

The first step in finding the SVD is to calculate the singular values $\sigma_i$ from the eigenvalues of $AA^T$.

$AA^T =$

The characteristic equation is:

$\det(AA^T - \lambda I) = 0$

Therefore, we will get:

$\lambda^2 - 34\lambda + 225 = 0$

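Factoring gives $(\lambda - 25)(\lambda - 9) = 0$, so the eigenvalues are 25 and 9.

The singular values are their square roots, $\sqrt{25} = 5$ and $\sqrt{9} = 3$, listed in descending order:

$\sigma_1 = 5$, $\sigma_2 = 3$.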
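This step can be reproduced in a few lines of NumPy. The matrix `A` below is an assumption: the article's matrix appears only as an image, so a hypothetical 2×3 matrix is used whose characteristic polynomial matches the one above.

```python
import numpy as np

# Hypothetical matrix (an assumption) whose A A^T has eigenvalues 9 and 25.
A = np.array([[3.0, 2.0, 2.0],
              [2.0, 3.0, -2.0]])

AAt = A @ A.T

# eigvalsh is meant for symmetric matrices and returns eigenvalues in ascending order.
eigenvalues = np.linalg.eigvalsh(AAt)

# Singular values are the square roots, conventionally sorted in descending order.
singular_values = np.sqrt(eigenvalues[::-1])

print(AAt)
print(eigenvalues)
print(singular_values)
```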

Next, we find the right singular vectors by finding an orthonormal set of eigenvectors of $A^TA$. Its eigenvalues are 25, 9, and 0. Because $A^TA$ is symmetric, eigenvectors belonging to distinct eigenvalues are orthogonal.

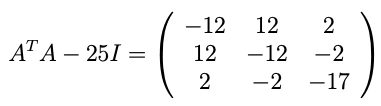

For λ = 25, we have

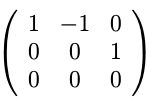

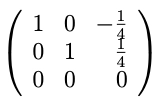

which is row-reduced to:

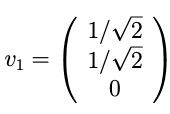

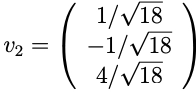

A unit length vector in that direction is:

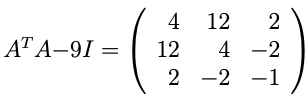

Similarly, we calculate the unit-length vector for λ = 9. In this case, $A^TA - 9I$ is row-reduced to:

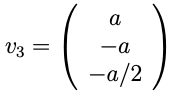

A unit length vector in that direction is:

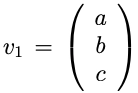
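Instead of row-reducing by hand, the eigenvectors for λ = 25 and λ = 9 can be obtained numerically. The sketch below uses `np.linalg.eigh` on a hypothetical matrix `A` (an assumption, since the article's matrix is shown only in a figure). Eigenvectors are determined only up to sign, so the result may differ from a hand calculation by a factor of -1.

```python
import numpy as np

# Hypothetical matrix (an assumption) whose A^T A has eigenvalues 25, 9, and 0.
A = np.array([[3.0, 2.0, 2.0],
              [2.0, 3.0, -2.0]])

AtA = A.T @ A

# eigh is meant for symmetric matrices: eigenvalues come back in ascending
# order and the eigenvectors are the unit-length columns of the second result.
eigenvalues, eigenvectors = np.linalg.eigh(AtA)

# Reorder so that the largest eigenvalue comes first (25, 9, 0).
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]

v1 = eigenvectors[:, 0]  # eigenvector for lambda = 25
v2 = eigenvectors[:, 1]  # eigenvector for lambda = 9

print(eigenvalues)
print(v1)
print(v2)
```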

For the last eigenvector, we can find a unit vector perpendicular to both $v_1$ and $v_2$:

$v_1^T v_3 = 0$

$v_2^T v_3 = 0$

Solving these equations, we get:

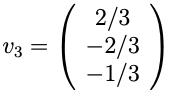

Therefore, $v_3$ is:

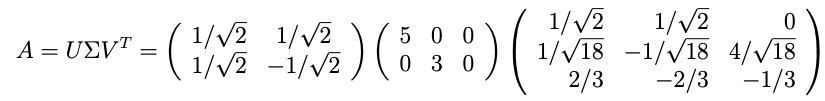
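In three dimensions, a vector perpendicular to two given vectors can also be obtained from their cross product, which is a shortcut for solving the two orthogonality equations. The sketch below uses this alternative; the values of `v1` and `v2` are assumptions taken from a hypothetical example matrix, not read from the figures.

```python
import numpy as np

# Assumed unit eigenvectors for lambda = 25 and lambda = 9 (hypothetical values).
v1 = np.array([1.0, 1.0, 0.0]) / np.sqrt(2.0)
v2 = np.array([1.0, -1.0, 4.0]) / np.sqrt(18.0)

# The cross product is orthogonal to both inputs.
v3 = np.cross(v1, v2)

# Normalize to unit length (already close to 1 because v1 and v2 are orthonormal).
v3 = v3 / np.linalg.norm(v3)

print(v3)
print(v1 @ v3, v2 @ v3)  # both dot products should be numerically zero
```

The sign of `v3` is arbitrary: both `v3` and `-v3` are valid unit vectors perpendicular to `v1` and `v2`.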

So far, we know Σ and $V^T$.

We can calculate each column of U from the corresponding non-zero singular value using the formula $u_i = \frac{1}{\sigma_i} A v_i$.
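The formula for the columns of U can be applied as follows. This is a sketch under stated assumptions: `A`, `sigma`, and the columns of `V` are hypothetical values consistent with the eigenvalues in this example, since the article's own matrices appear only in figures.

```python
import numpy as np

# Hypothetical matrix and its assumed singular values and right singular vectors.
A = np.array([[3.0, 2.0, 2.0],
              [2.0, 3.0, -2.0]])
sigma = np.array([5.0, 3.0])
V = np.column_stack([
    np.array([1.0, 1.0, 0.0]) / np.sqrt(2.0),    # v1, eigenvalue 25
    np.array([1.0, -1.0, 4.0]) / np.sqrt(18.0),  # v2, eigenvalue 9
    np.array([2.0, -2.0, -1.0]) / 3.0,           # v3, eigenvalue 0
])

# u_i = (1 / sigma_i) * A v_i for each non-zero singular value.
U = np.column_stack([A @ V[:, i] / sigma[i] for i in range(len(sigma))])

# Build the 2x3 Sigma and confirm that the three factors reproduce A.
Sigma = np.zeros(A.shape)
Sigma[:len(sigma), :len(sigma)] = np.diag(sigma)
A_rebuilt = U @ Sigma @ V.T

print(U)
print(np.allclose(A, A_rebuilt))
```

Only the vectors paired with non-zero singular values are used here; `v3` belongs to the eigenvalue 0 and contributes nothing to the product.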

Hence, matrix A is factorized into the three matrices U, Σ, and $V^T$.