Why do we need Language Models?

Language models help computers work with human language so that they can carry out tasks that make our lives easier. For example, automatically suggesting the next word as we type saves us time.

Language models are used in many applications, such as machine translation. When converting text from one language to another, a system may produce several candidate outputs. For example, given the two candidates “I like mangoes” and “mangoes like I”, we know that “I like mangoes” sounds correct, so a language model is used to estimate the probability of each candidate and pick the one that fits best.
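As a rough illustration of how candidates can be ranked, here is a minimal sketch that scores both sentences with a bigram model using add-one smoothing. The tiny corpus, the `<s>`/`</s>` boundary markers, and the function name `log_prob` are assumptions made for this example, not part of any particular translation system.

```python
import math
from collections import Counter

# Hypothetical toy corpus; a real system would train on far more text.
corpus = [
    "i like mangoes",
    "i like apples",
    "we like mangoes",
]

unigrams = Counter()
bigrams = Counter()
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    unigrams.update(tokens)
    bigrams.update(zip(tokens, tokens[1:]))

vocab_size = len(unigrams)


def log_prob(sentence):
    """Log-probability of a sentence under an add-one smoothed bigram model."""
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    total = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        # Add-one smoothing keeps unseen bigrams from getting zero probability.
        total += math.log((bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size))
    return total


candidates = ["I like mangoes", "mangoes like I"]
best = max(candidates, key=log_prob)
print(best)  # I like mangoes
```

The word order seen in the corpus gets the higher score, which is the behaviour we want when choosing between candidate translations.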

Similarly, we can use language models for text summarization, information retrieval, optical character recognition, etc.

Types of Language Models

There are two major types of language models:

- Statistical Language Models

- Neural Language Models

Let’s dive deeper into the types of language models!

- Statistical Language Models

These models use conventional statistical methods such as n-grams, Hidden Markov Models, and linguistic features to learn the probability distribution of words. The main types are:

- N-grams

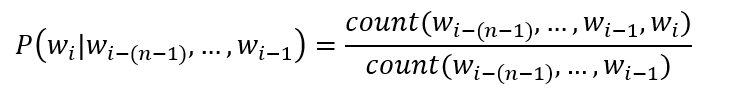

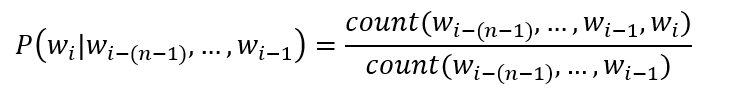

This is the simplest language model. Here we learn the probability distribution of sequences of N words, where N can be any positive integer, and the model is named after N: for N = 1 it is a unigram model, for N = 2 a bigram model, for N = 3 a trigram model, and so on. For example, “It is” is a bigram because it contains two words; a trigram contains three. In general, an n-gram model approximates the conditional probability of the word w at position i using only the previous n - 1 words:

$$P(w_i \mid w_1, \ldots, w_{i-1}) \approx P(w_i \mid w_{i-(n-1)}, \ldots, w_{i-1})$$

where n is the order of the model, that is, the number of words in each n-gram.
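The conditional probability of a word given its preceding words can be estimated from counts. The sketch below assumes a maximum-likelihood estimate with no smoothing, and takes `n` to be the n-gram order (so each word is conditioned on the previous n - 1 words); the corpus and the helper names `train_ngram` and `cond_prob` are made up for illustration.

```python
from collections import Counter, defaultdict


def train_ngram(sentences, n):
    """Count how often each word follows each (n-1)-word context."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # Pad the start so the first real word also has a full context.
        tokens = ["<s>"] * (n - 1) + sentence.lower().split() + ["</s>"]
        for i in range(n - 1, len(tokens)):
            context = tuple(tokens[i - (n - 1):i])
            counts[context][tokens[i]] += 1
    return counts


def cond_prob(counts, context, word):
    """Maximum-likelihood estimate of P(word | context); 0.0 for an unseen context."""
    seen = counts.get(tuple(context))
    if not seen:
        return 0.0
    return seen[word] / sum(seen.values())


corpus = ["it is raining", "it is sunny", "it was sunny"]

bigram = train_ngram(corpus, n=2)
print(cond_prob(bigram, ["it"], "is"))  # "it" is followed by "is" in 2 of 3 cases

trigram = train_ngram(corpus, n=3)
print(cond_prob(trigram, ["it", "is"], "sunny"))  # 1 of 2 cases
```

A practical model would add smoothing or backoff so that unseen n-grams do not receive zero probability.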

- Bidirectional

Here the text is analyzed in both directions, forward and backward, unlike n-gram models, which condition only on the preceding words. Using context from both sides can improve the accuracy of word prediction.
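To see why context on both sides can help, here is a toy count-based sketch that fills a blank using either the previous word only or both neighbours. It is only an illustration of the idea under a made-up corpus; real bidirectional models are built differently, and the function names are hypothetical.

```python
from collections import Counter

# Hypothetical toy corpus.
corpus = ["the dog barked", "the dog slept", "the cat purred"]

left_counts = Counter()  # (previous word, word)
both_counts = Counter()  # (previous word, word, next word)
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    for i in range(1, len(tokens) - 1):
        left_counts[(tokens[i - 1], tokens[i])] += 1
        both_counts[(tokens[i - 1], tokens[i], tokens[i + 1])] += 1


def fill_left_only(prev):
    """Most frequent word seen after `prev` (backward context only)."""
    candidates = {w: c for (p, w), c in left_counts.items() if p == prev}
    return max(candidates, key=candidates.get) if candidates else None


def fill_both(prev, nxt):
    """Most frequent word seen between `prev` and `nxt` (context on both sides)."""
    candidates = {w: c for (p, w, n), c in both_counts.items() if p == prev and n == nxt}
    return max(candidates, key=candidates.get) if candidates else None


# Fill the blank in "the ___ purred".
print(fill_left_only("the"))       # dog
print(fill_both("the", "purred"))  # cat
```

With only the preceding word, the most common continuation wins; adding the following word picks the choice that fits the whole sentence.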

- Exponential

This model evaluates text with an equation that combines n-grams and feature functions. It is based on the principle of maximum entropy: among all probability distributions consistent with the observed features, the one with the highest entropy is the best choice. Because they make fewer statistical assumptions, these models can produce more accurate results.
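A common form of such a model is log-linear: each candidate word gets a score that is a weighted sum of feature values, and the scores are exponentiated and normalised. The sketch below assumes that form; the vocabulary, feature functions, and weights are hypothetical and hand-set, whereas in practice the weights are learned from data.

```python
import math

VOCAB = ["mangoes", "apples", "like"]


def features(history, word):
    """Hypothetical feature functions f_i(history, word)."""
    prev = history[-1] if history else "<s>"
    return {
        f"bigram:{prev}_{word}": 1.0,                     # n-gram feature
        f"unigram:{word}": 1.0,                           # word identity feature
        "ends_in_s": 1.0 if word.endswith("s") else 0.0,  # hand-written feature
    }


# Illustrative weights; a real model would learn these during training.
WEIGHTS = {
    "bigram:like_mangoes": 1.5,
    "bigram:like_apples": 1.0,
    "unigram:like": 0.5,
    "ends_in_s": 0.2,
}


def score(history, word):
    """Weighted sum of the active features."""
    return sum(WEIGHTS.get(name, 0.0) * value
               for name, value in features(history, word).items())


def prob(history, word):
    """P(word | history) = exp(score) / Z(history)."""
    z = sum(math.exp(score(history, w)) for w in VOCAB)  # normalising constant
    return math.exp(score(history, word)) / z


dist = {w: prob(["i", "like"], w) for w in VOCAB}
print(max(dist, key=dist.get))  # mangoes
```

The probabilities over the vocabulary sum to 1, and any feature that can be computed from the history and the candidate word can be added without changing the rest of the model.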

- Continuous space

Here words are represented as non-linear combinations of the weights in a neural network. This is useful when the vocabulary is large and contains many unique words.
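One practical consequence of representing words as continuous vectors is that similar words can end up close together. The sketch below uses hand-picked, hypothetical 3-dimensional vectors purely to show the comparison; a real model learns its vectors during training.

```python
import math

# Hypothetical embeddings chosen by hand for illustration.
EMBEDDINGS = {
    "mango": [0.9, 0.8, 0.1],
    "apple": [0.8, 0.9, 0.2],
    "laptop": [0.1, 0.2, 0.9],
}


def cosine(u, v):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest(word):
    """Return the other word whose vector is most similar to `word`."""
    others = (w for w in EMBEDDINGS if w != word)
    return max(others, key=lambda w: cosine(EMBEDDINGS[word], EMBEDDINGS[w]))


print(nearest("mango"))  # apple
```

Because related words share nearby vectors, what the model learns about one word can carry over to others, which helps when many words are rare.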

Exponential and continuous space models can perform better than n-gram models because they cope better with language variation and ambiguity.

As the number of words grows, the number of possible word sequences grows with it, so the patterns available for predicting the next word become weaker. To tackle this, we use Neural Language Models.
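A quick calculation shows how fast the space of word sequences grows. The vocabulary size of 10,000 is an assumed figure for illustration only.

```python
def possible_ngrams(vocab_size, n):
    """Number of distinct word sequences of length n over a vocabulary."""
    return vocab_size ** n


VOCAB_SIZE = 10_000  # assumed vocabulary size
for n in (1, 2, 3, 4):
    print(n, possible_ngrams(VOCAB_SIZE, n))
```

Even a very large corpus contains only a small fraction of these sequences, so most longer n-grams are never observed and their counts give little to learn from.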

- Neural Language Models

These language models are based on neural networks and are a more advanced approach to solving NLP problems. Because they do not depend on explicit n-gram counts, they can handle longer-range relationships in text.

Examples include the Recurrent Neural Network (RNN) and the Gated Recurrent Unit (GRU).
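To give a feel for how a recurrent network carries earlier context forward, here is a minimal sketch of one simple (Elman-style) recurrent step, h_t = tanh(W_xh x_t + W_hh h_{t-1} + b), written in plain Python. The weights and input vectors are hypothetical fixed values; a real network learns its weights and usually relies on a deep learning library.

```python
import math


def rnn_step(x, h_prev, w_xh, w_hh, bias):
    """One recurrent step: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b)."""
    h = []
    for j in range(len(h_prev)):
        total = bias[j]
        total += sum(w_xh[j][k] * x[k] for k in range(len(x)))
        total += sum(w_hh[j][k] * h_prev[k] for k in range(len(h_prev)))
        h.append(math.tanh(total))
    return h


# Hypothetical fixed weights for 2-dimensional inputs and hidden states.
W_XH = [[0.5, -0.3], [0.1, 0.8]]
W_HH = [[0.4, 0.2], [-0.1, 0.6]]
BIAS = [0.0, 0.0]


def encode(sequence):
    """Run the recurrent step over a whole sequence and return the final state."""
    h = [0.0, 0.0]
    for x in sequence:
        h = rnn_step(x, h, W_XH, W_HH, BIAS)
    return h


# Two sequences that end with the same input but start differently.
h_a = encode([[1.0, 0.0], [0.0, 1.0]])
h_b = encode([[0.0, 1.0], [0.0, 1.0]])
print(h_a)
print(h_b)
```

The two final states differ even though the last input is the same, because the hidden state keeps a summary of everything seen earlier in the sequence.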

FAQs

1. What are language models?

Language models are statistical models which learn and predict the probability distribution of a sequence of words.

2. What are the applications of language models?

Language models have multiple applications like handwriting recognition, text summarization, machine translation, etc.

3. What is a statistical language model in NLP?

Statistical language models use conventional statistical methods such as n-grams and Hidden Markov Models to analyze and predict the probability distribution of words.

4. What is the goal of a language model?

The goal of Language Models is to predict the probability distribution of words.

5. What are the types of language models?

There are two primary types: Statistical Language Models, such as n-grams, and Neural Language Models.

Key Takeaways

This article discussed language models and their uses, and explored the different types of language models.


Happy Coding!