Introduction

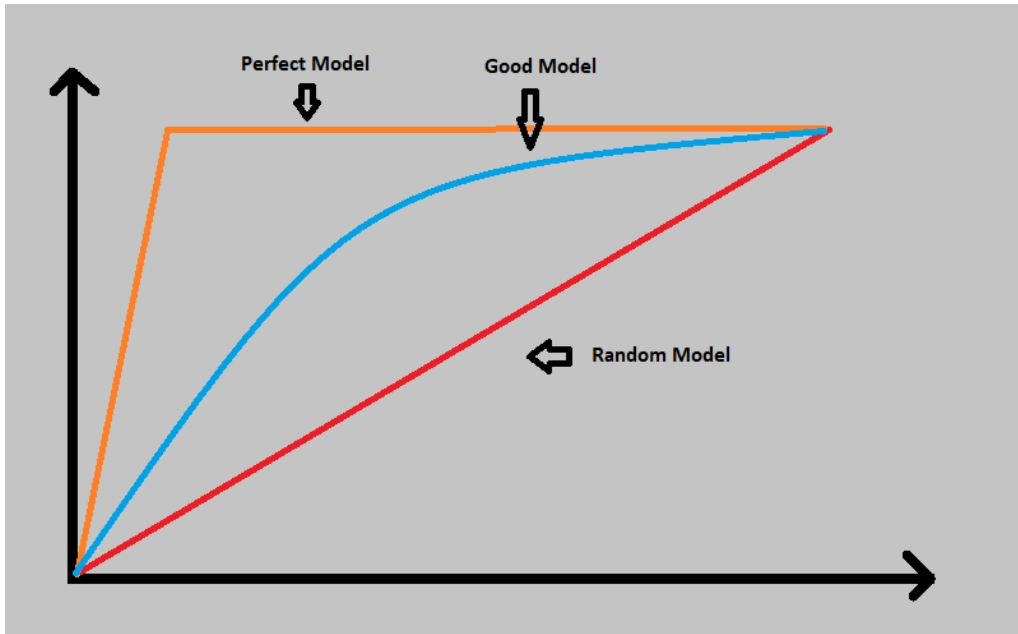

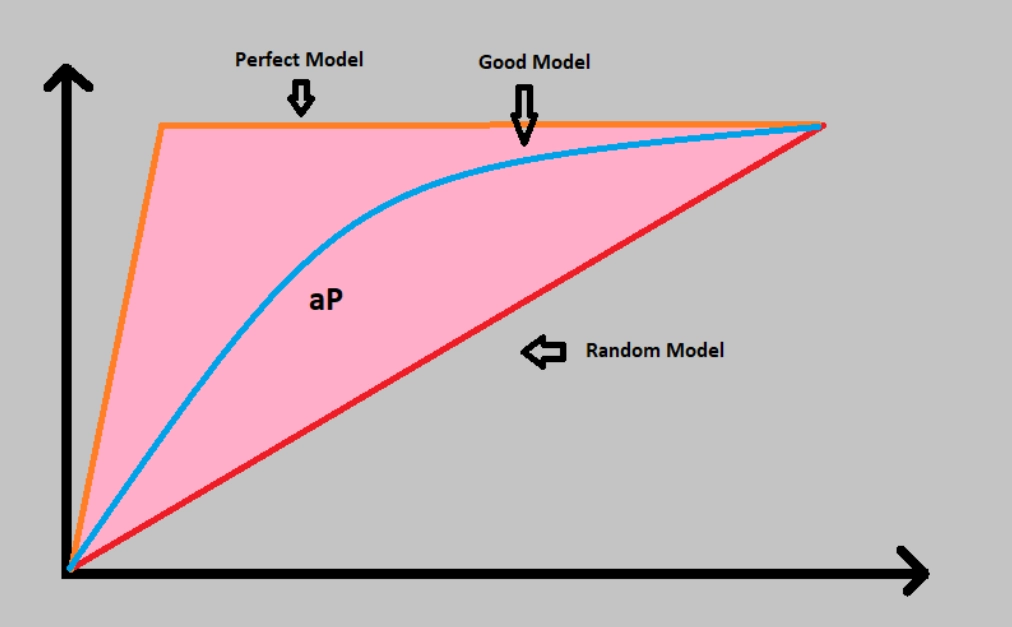

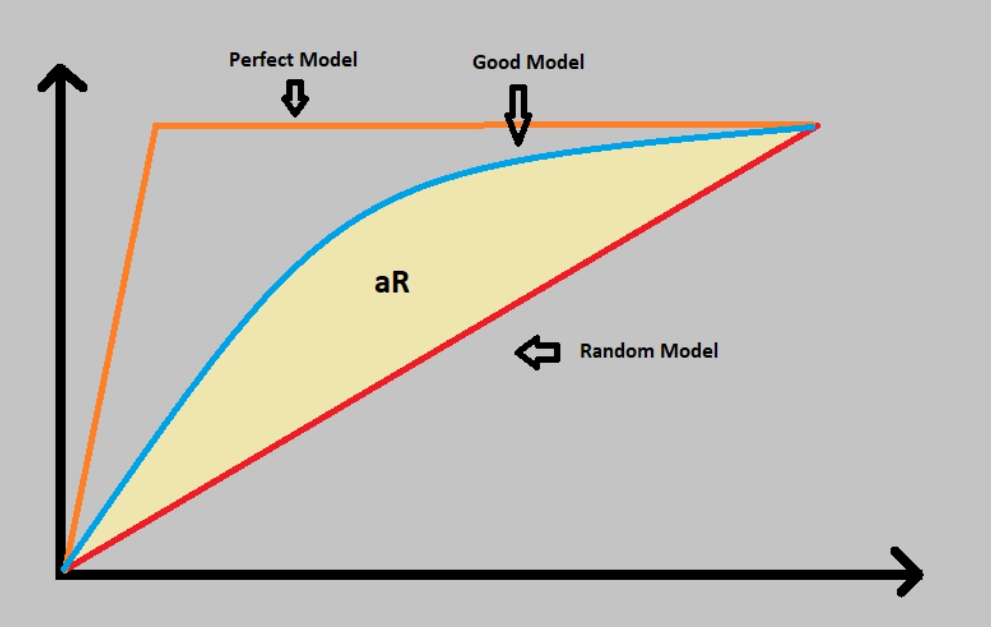

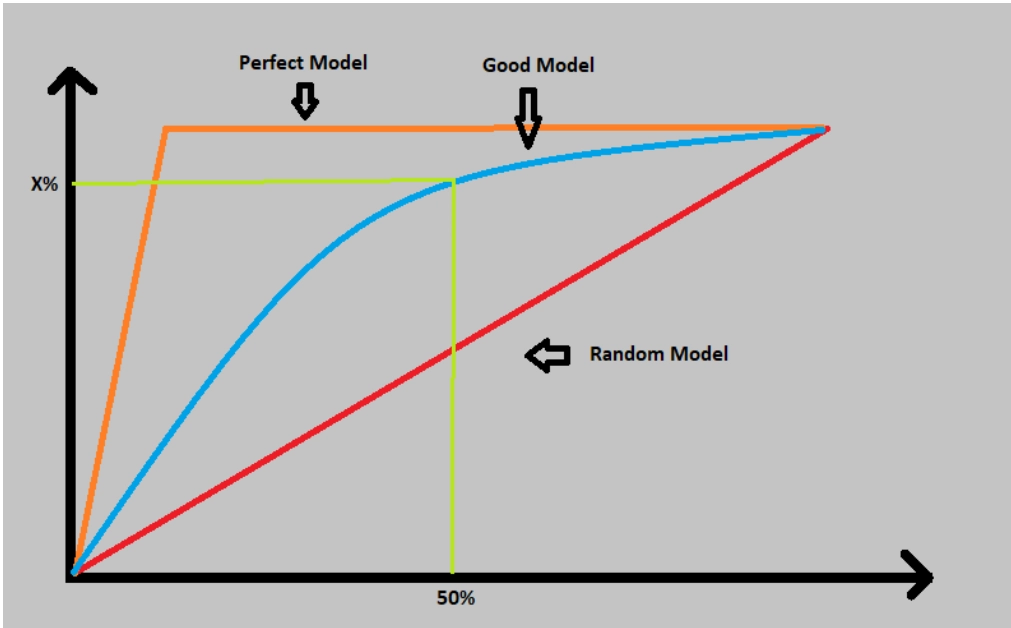

Performance measurement plays an important role in any machine learning project, and it is important to keep a record of how well or how poorly a model performs. For regression models, we typically use R² (R-squared) or RMSE (root mean squared error). For classification models, we prefer the AUC-ROC curve or the CAP curve. These curves are defined for binary classification and can also be extended to multi-class classification problems, for example by treating each class as one-vs-rest. In this article, we will discuss the classification-based model curves. Let’s get started.

Source: Giphy

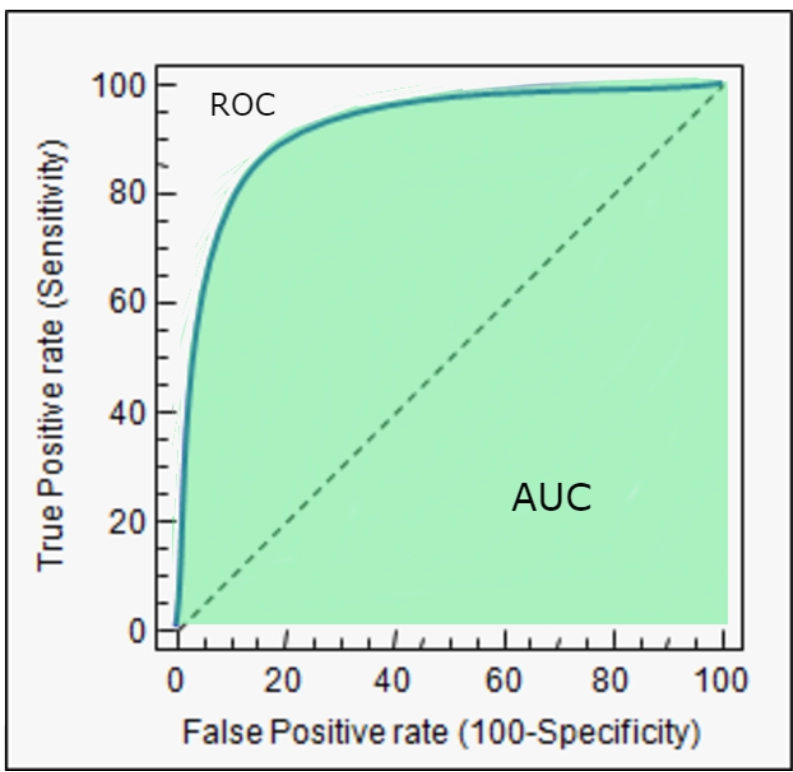

AUC-ROC curve

AUC-ROC refers to the area under the curve (AUC) of the receiver operating characteristic (ROC) curve. It measures classification performance across various threshold levels and is considered one of the most important evaluation measures for binary classification problems. ROC is a probability curve that plots the true positive rate (TPR) against the false positive rate (FPR). AUC measures separability, that is, how well the model can distinguish between the classes. The greater the AUC value, the better the model.

source: link

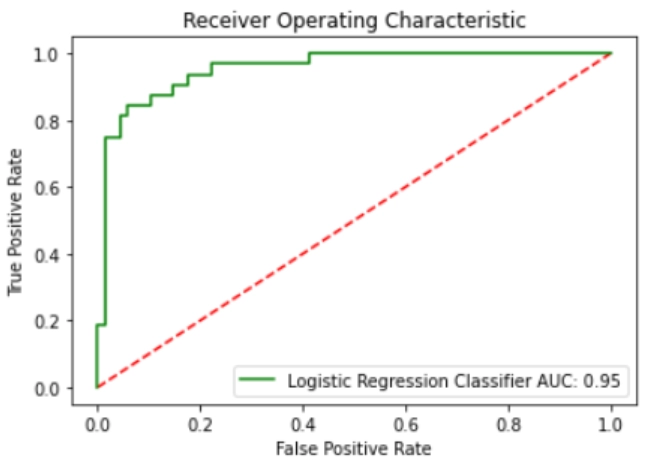

ROC curve

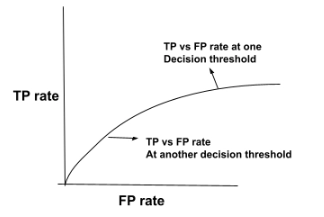

ROC stands for receiver operating characteristic. The ROC curve is a graph showing the performance of a classification model at all classification thresholds. It plots two parameters:

- True positive rate (TPR)

- False positive rate (FPR)
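To make the two parameters concrete, here is a minimal Python sketch that computes them from confusion-matrix counts, using the standard definitions TPR = TP / (TP + FN) and FPR = FP / (FP + TN). The function name `tpr_fpr` and the sample labels are illustrative assumptions, not part of any library.

```python
def tpr_fpr(y_true, y_pred):
    """Return (TPR, FPR) for binary labels and binary predictions (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    tpr = tp / (tp + fn)  # share of actual positives that were caught
    fpr = fp / (fp + tn)  # share of actual negatives flagged as positive
    return tpr, fpr


# Made-up labels and predictions, for illustration only.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
print(tpr_fpr(y_true, y_pred))  # (0.75, 0.25)
```

The sketch assumes both classes are present in `y_true`; otherwise one of the denominators would be zero.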

A ROC curve plots TPR against FPR at different classification thresholds. Lowering the classification threshold classifies more items as positive, which increases both the false positives and the true positives. Now, let’s look at the figure showing TP rate vs. FP rate at various classification thresholds.
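The effect of lowering the threshold can be sketched in a few lines of Python. This is an illustrative example with made-up scores, and `roc_point` is a hypothetical helper: it treats every score at or above the threshold as a positive prediction and returns one (FPR, TPR) point.

```python
def roc_point(y_true, scores, threshold):
    """Classify score >= threshold as positive and return (FPR, TPR)."""
    tp = fp = 0
    for label, score in zip(y_true, scores):
        if score >= threshold:
            if label == 1:
                tp += 1
            else:
                fp += 1
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    return fp / n_neg, tp / n_pos


# Made-up labels and model scores, for illustration only.
y_true = [0, 0, 1, 0, 1, 1]
scores = [0.1, 0.3, 0.35, 0.6, 0.7, 0.9]

for thr in (0.8, 0.5, 0.2):
    fpr, tpr = roc_point(y_true, scores, thr)
    print(f"threshold={thr}: FPR={fpr:.2f}, TPR={tpr:.2f}")
```

With this data, each lower threshold yields a point further up and to the right: neither rate can decrease when more items are labelled positive.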

To compute the points on a ROC curve, we could evaluate a logistic regression model many times with different classification thresholds, but this trial-and-error process is very inefficient. Instead, an efficient sorting-based algorithm can provide this information in a single pass, and the resulting measure is known as AUC. Now, let’s look at AUC in more detail.
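One common way to realise the sorting idea is sketched below. It is not necessarily the exact algorithm the text has in mind. The examples are sorted once by descending score, then a single walk down the list accumulates true and false positives, and each step yields one ROC point. The area is then approximated with the trapezoidal rule. The function names are hypothetical, and the sketch assumes there are no tied scores.

```python
def roc_curve_sorted(y_true, scores):
    """Return ROC points as (FPR, TPR) using one sort and one pass.

    Assumes binary labels (1 = positive) and no tied scores.
    """
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    # Indices ordered from highest score to lowest.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    points = [(0.0, 0.0)]  # threshold above every score: nothing is positive
    for i in order:
        if y_true[i] == 1:
            tp += 1
        else:
            fp += 1
        points.append((fp / n_neg, tp / n_pos))
    return points


def auc_trapezoid(points):
    """Area under a piecewise-linear curve given as (x, y) points."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Made-up labels and model scores, for illustration only.
y_true = [0, 0, 1, 0, 1, 1]
scores = [0.1, 0.3, 0.35, 0.6, 0.7, 0.9]
points = roc_curve_sorted(y_true, scores)
print(round(auc_trapezoid(points), 4))  # 0.8889
```

Sorting costs O(n log n) once, instead of re-scoring the whole dataset for every candidate threshold.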

AUC

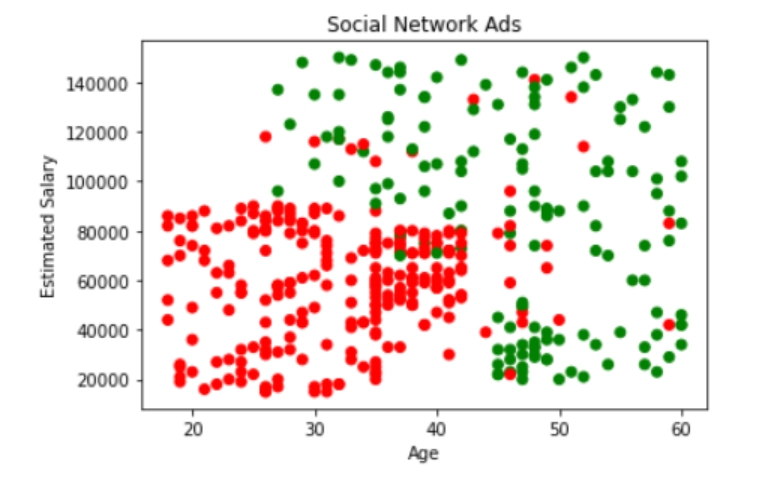

AUC stands for area under the ROC curve. In other words, AUC measures the entire two-dimensional area underneath the ROC curve. AUC also provides an aggregate measure of performance across all possible classification thresholds. One way to interpret AUC is as the probability that the model ranks a random positive example more highly than a random negative example. Now, let’s go through an example in which the examples are arranged from left to right in ascending order of predicted score.
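The probabilistic reading of AUC can be checked directly on a small dataset. The sketch below compares every positive example with every negative example and reports the fraction of pairs in which the positive one has the higher score. Counting a tie as half a win is a common convention, assumed here. `auc_pairwise` is a hypothetical helper and the data is made up.

```python
from itertools import product


def auc_pairwise(y_true, scores):
    """Fraction of (positive, negative) pairs where the positive scores higher."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p, n in product(pos, neg):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5  # assumption: a tie counts as half a win
    return wins / (len(pos) * len(neg))


# Examples listed left to right in ascending order of score.
y_true = [0, 0, 0, 1, 0, 1, 1, 1]
scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
print(auc_pairwise(y_true, scores))  # 0.9375
```

Only one of the 16 positive-negative pairs is out of order (the positive at 0.4 against the negative at 0.5), which gives 15/16. This brute-force version is quadratic in the number of examples, so it is suited to illustration rather than large datasets.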

Source: link

The figure above shows all the predictions ranked in ascending order of logistic regression score. AUC represents the probability that a random positive (green) example is placed to the right of a random negative (red) example. AUC ranges from 0 to 1. A model whose predictions are 100% wrong has an AUC of 0.0, and a model whose predictions are 100% correct has an AUC of 1.0. AUC is desirable because it measures how well the predictions are ranked, irrespective of their absolute values (scale invariance) and of the classification threshold chosen (threshold invariance).
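The two extremes and the independence from absolute values can be illustrated with a short, self-contained sketch. The `auc` helper below is a hypothetical pairwise implementation that counts ties as half a win, and the score lists are made up.

```python
def auc(y_true, scores):
    """Pairwise AUC: share of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


y_true = [0, 0, 1, 1]
perfect = [0.1, 0.2, 0.8, 0.9]       # every positive above every negative
inverted = [0.9, 0.8, 0.2, 0.1]      # every positive below every negative
mixed = [0.1, 0.6, 0.4, 0.9]         # one pair out of order
squashed = [s / 10 for s in mixed]   # same ranking, different absolute values

print(auc(y_true, perfect))   # 1.0
print(auc(y_true, inverted))  # 0.0
print(auc(y_true, mixed))     # 0.75
print(auc(y_true, squashed))  # 0.75
```

Rescaling the scores leaves the AUC unchanged because only the ordering of the examples matters, not the values themselves.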