Introduction

A data engineer typically pulls data from several sources, cleans it, and aggregates it, often at volumes too large for a single processor to handle in reasonable time. This article covers parallel programming, a fundamental concept in computing and in data engineering in particular, which allows applications to process enormous amounts of data in a relatively short period of time.

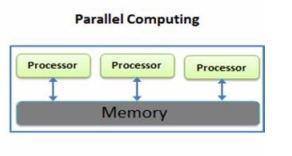

Parallel computing involves splitting a large problem into smaller ones, each of which is handled by its own processor. The smaller pieces are processed simultaneously, and their partial results are then combined into the final answer.

Serial computing is the traditional approach: a single processor completes one task at a time. Parallel computing, by contrast, divides the work into parts and executes them at once on several processors. Modelling and simulating real-world events is a particular strength of parallel computing systems.
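The contrast between serial and parallel execution can be sketched in a few lines of Python. This is a minimal illustration, not a benchmark: the function names (`sum_of_squares`, `split`, `parallel_total`) and the choice of summing squares as the workload are assumptions made for the example, and worker processes on one machine stand in for separate processors.

```python
from concurrent.futures import ProcessPoolExecutor


def sum_of_squares(chunk):
    """The small sub-problem that each worker solves independently."""
    return sum(x * x for x in chunk)


def split(data, n_chunks):
    """Split data into at most n_chunks contiguous slices of near-equal size."""
    size = max(1, -(-len(data) // n_chunks))  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def serial_total(data):
    """Serial version: one worker walks through the whole input."""
    return sum_of_squares(data)


def parallel_total(data, workers=4, executor_cls=ProcessPoolExecutor):
    """Parallel version: split the input, solve the chunks at once, combine."""
    chunks = split(data, workers)
    with executor_cls(max_workers=workers) as pool:
        # Each chunk is handed to a separate worker; map keeps input order.
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)
```

Both functions return the same total; only the way the work is scheduled differs. When using the default process pool, call `parallel_total` from under an `if __name__ == "__main__":` guard, since some platforms start worker processes by re-importing the main module. For small inputs the cost of starting workers and copying data can outweigh the gain.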

How does it Work?

Parallel computing infrastructure is commonly housed in a single data centre containing many processors. A computation request is split into small chunks, the chunks are distributed across several servers, and all of them are processed simultaneously.

Four types of parallelism are commonly distinguished, and they are supported by proprietary and open source vendors alike:

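1. Bit-level parallelism: Increasing the processor word size reduces the number of instructions required to operate on variables that are longer than the word. For example, an 8-bit processor needs two instructions to add two 16-bit integers, whereas a 16-bit processor needs only one.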

2. Instruction-level parallelism: In the hardware approach (dynamic parallelism), the processor decides at run time which instructions to execute in parallel. In the software approach (static parallelism), the compiler makes that decision at compile time.

3. Task parallelism: Multiple different tasks are executed simultaneously across multiple processors, on the same data or on different data.

4. Superword-level parallelism: A vectorization technique that exploits the parallelism available in straight-line (inline) code by packing similar, independent operations into a single vector instruction.
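The first item in the list, bit-level parallelism, is easy to see with a little arithmetic. The sketch below emulates adding two unsigned 64-bit values on a machine whose word is only 32 bits wide, and compares it with a single 64-bit addition. The function names are hypothetical, and Python integers are used here only to model what the hardware would do.

```python
MASK32 = 0xFFFFFFFF
MASK64 = 0xFFFFFFFFFFFFFFFF


def add64_with_32bit_words(a, b):
    """Add two unsigned 64-bit values using only 32-bit-wide additions."""
    low = (a & MASK32) + (b & MASK32)       # first add: low words
    carry = low >> 32                       # carry out of the low word
    high = (a >> 32) + (b >> 32) + carry    # second add: high words plus carry
    return ((high & MASK32) << 32) | (low & MASK32)


def add64_with_64bit_word(a, b):
    """The same addition when the word is 64 bits wide: a single add."""
    return (a + b) & MASK64
```

The narrow-word version needs two additions and carry handling for every 64-bit sum, while the wide-word version needs one. That saving, repeated across every operation on wide values, is what a larger word size buys.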

With the rise of multicore and GPU-based processors, parallel computing has become increasingly important. By combining GPUs with CPUs, applications can process more data and perform more calculations simultaneously. On workloads that parallelize well, a GPU can complete more work in a shorter period of time than a CPU.

Merits of the system

Scalability: A parallel system can accommodate increasing volumes of data effectively and flexibly, because more computing units can easily be added.

Load Balancing: The workload is shared across the various systems so that no single one becomes a bottleneck.

Virtualization: Using virtualization, users can share system resources effortlessly while ensuring privacy and security by isolating and protecting them from one another.
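Load balancing, mentioned among the merits above, can be illustrated with a simple greedy heuristic: sort the tasks from most to least expensive and always hand the next one to the worker with the smallest load so far. This is only one possible strategy, chosen here for brevity. The function name `balance` and the idea of a known numeric cost per task are assumptions of the sketch.

```python
import heapq


def balance(task_costs, n_workers):
    """Assign each task to the currently least-loaded worker.

    Returns a dict mapping worker id to the list of task costs it received.
    """
    # Min-heap of (current_load, worker_id); the lightest worker is on top.
    heap = [(0, worker) for worker in range(n_workers)]
    heapq.heapify(heap)
    assignment = {worker: [] for worker in range(n_workers)}

    # Placing the largest tasks first tends to give a more even result.
    for cost in sorted(task_costs, reverse=True):
        load, worker = heapq.heappop(heap)
        assignment[worker].append(cost)
        heapq.heappush(heap, (load + cost, worker))
    return assignment
```

For example, `balance([8, 7, 6, 5, 4], 2)` gives one worker the tasks costing 8, 5 and 4 and the other the tasks costing 7 and 6, for loads of 17 and 13. Real schedulers usually do not know task costs in advance and balance dynamically instead, for instance by letting idle workers pull the next task from a shared queue.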


Issues in the system

- A technical problem can leave resources unresponsive, which stalls the work assigned to them.
